OpenAI Launches “Workspace Agents” to Industrialize Corporate Labor

The post OpenAI Launches “Workspace Agents” to Industrialize Corporate Labor appeared first on Daily CyberSecurity.


kali-undercover --backtrack

The mode can be toggled off by executing the same command again, restoring the default Kali interface. This addition blends nostalgia with functionality, allowing long-time users to revisit the environment that laid the groundwork for modern penetration testing distributions.

By 2026, AI agent sprawl has become a critical SaaS security risk. With 80% of organizations reporting unintended agent actions, the "visibility gap" is the new frontier for cyber threats. Learn how to govern autonomous agents using comprehensive inventories, permission mapping, and automated risk scoring.

The post Tackling the Uncontrolled Growth of AI Agents in Modern SaaS Environments appeared first on Security Boulevard.

In the evolving digital economy, adopting a prevention-first strategy for cloud workflows is essential. This article explores the importance of preemptive security measures to protect sensitive operations from breaches, detailing steps for organizations to enhance their security posture.

The post Prevention is the Only Cloud Security Strategy That Works appeared first on Security Boulevard.

As AI adoption accelerates, organizations must evolve their security strategies from prompt filtering to comprehensive behavioral monitoring. This shift is critical to safeguarding against adaptive threats and ensuring safe AI deployment in production environments.

The post The Attack Chain Your AI System is Already Missing appeared first on Security Boulevard.

Cloudflare plays a significant role in supporting the Internet’s infrastructure. As a reverse proxy used by approximately 20% of all websites, we sit directly in the request path between users and the origin, helping to improve performance, security, and reliability at scale. Beyond that, our global network powers services like delivery, Workers, and R2, making Cloudflare not just a passive intermediary but an active platform for delivering and hosting content across the Internet.

Since Cloudflare’s launch in 2010, we have collaborated with the National Center for Missing and Exploited Children (NCMEC), a US-based clearinghouse for reporting child sexual abuse material (CSAM), and are committed to doing what we can to support identification and removal of CSAM content.

Members of the public, customers, and trusted organizations can submit reports of abuse observed on Cloudflare’s network. A minority of these reports relate to CSAM, and Cloudflare’s Trust & Safety team triages those with the highest priority. We also forward the details of each such report to NCMEC, along with relevant files (where applicable) and supplemental information.

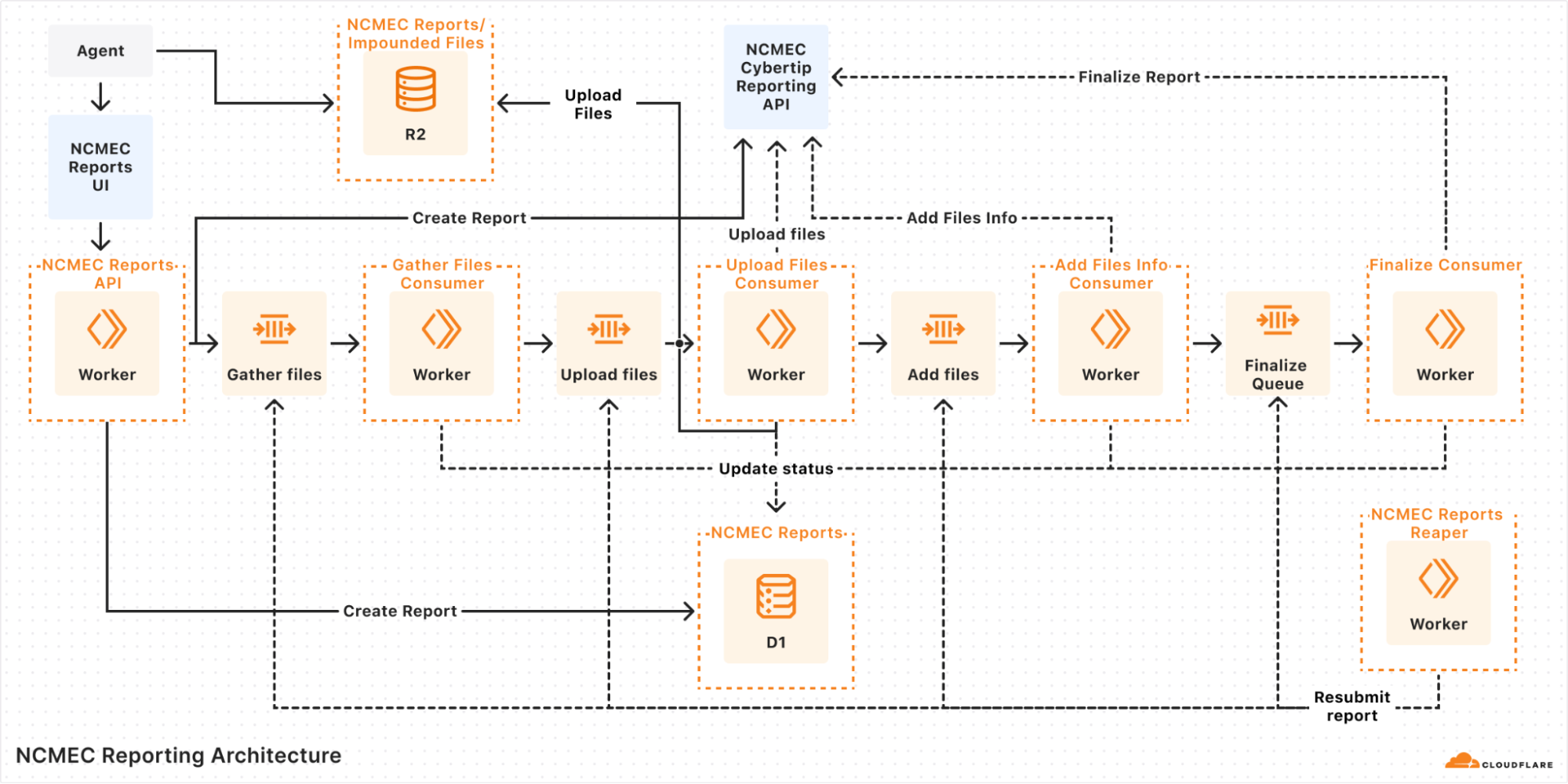

The process to generate and submit reports to NCMEC involves multiple steps, dependencies, and error handling, which quickly became complex under our original queue-based architecture. In this blog post, we discuss how Cloudflare Workflows helped streamline this process and simplify the code behind it.

When we designed our latest NCMEC reporting system in early 2024, Cloudflare Workflows did not exist yet. We used Queues, part of the Workers platform, to manage asynchronous tasks, and structured our system around them.

Our goal was to ensure reliability, fault tolerance, and automatic retries. However, without an orchestrator, we had to manually handle state, retries, and inter-queue messaging. While Queues worked, we needed something more explicit to help debug and observe the more complex asynchronous workflows we were building on top of the messaging system that Queues gave us.

In our queue-based architecture, each report would go through multiple steps:

Validate input: Ensure the report has all necessary details.

Initiate report: Call the NCMEC API to create a report.

Fetch impounded files (if applicable): Retrieve files stored in R2.

Upload files: Send files to NCMEC via API.

Finalize report: Mark the report as completed.
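As a rough illustration of the queue-per-step design listed above, the sketch below models each hop as a message tagged with its current stage, plus a router that decides which queue receives the report next. The stage names, the `ReportMessage` shape, and the `nextStage` and `tracePath` helpers are hypothetical and only mirror the list above; they are not our production code.

```typescript
// Hypothetical stage names mirroring the five steps listed above.
type Stage = "validate" | "initiate" | "fetchFiles" | "uploadFiles" | "finalize";

// Hypothetical message shape passed from one queue to the next.
interface ReportMessage {
  reportId: number;
  stage: Stage;
  hasImpoundedFiles: boolean;
}

// Decide which queue should receive the report after the current stage succeeds.
// Returns null once the report has been finalized.
function nextStage(msg: ReportMessage): Stage | null {
  switch (msg.stage) {
    case "validate":
      return "initiate";
    case "initiate":
      // Reports without impounded files skip straight to finalization.
      return msg.hasImpoundedFiles ? "fetchFiles" : "finalize";
    case "fetchFiles":
      return "uploadFiles";
    case "uploadFiles":
      return "finalize";
    case "finalize":
      return null;
  }
}

// Walk a report through every hop, recording the queues it visits.
function tracePath(reportId: number, hasImpoundedFiles: boolean): Stage[] {
  const path: Stage[] = [];
  let stage: Stage | null = "validate";
  while (stage !== null) {
    path.push(stage);
    stage = nextStage({ reportId, stage, hasImpoundedFiles });
  }
  return path;
}
```

Every entry in the returned path corresponds to a separate queue hop, which is why following a single report meant reading logs from several consumers.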

A diagram of our queue-based architecture

Each of these steps was handled by a separate queue, and if an error occurred, the system would retry the message several times before marking the report as failed. But errors weren’t always straightforward — for instance, if an external API call consistently failed due to bad input or returned an unexpected response shape, retries wouldn’t help. In those cases, the report could get stuck in an intermediate state, and we’d often have to manually dig through logs across different queues to figure out what went wrong.
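To make the distinction between transient and permanent failures concrete, here is a minimal sketch of the decision a consumer has to make after each delivery attempt. The `CallResult` shape, the `decide` function, and the status-code rules are assumptions chosen for illustration, not a description of the NCMEC API or of our actual consumers.

```typescript
// Hypothetical outcome of a call to an external API.
interface CallResult {
  status: number;       // HTTP-style status code
  bodyIsValid: boolean; // whether the response matched the expected shape
}

type Decision = "done" | "retry" | "fail-permanently";

// Decide what to do with a message after one delivery attempt.
function decide(result: CallResult, attempt: number, maxAttempts: number): Decision {
  const ok = result.status >= 200 && result.status < 300;
  if (ok && result.bodyIsValid) return "done";

  // Bad input (4xx other than 429) or an unexpected response shape will fail
  // the same way on every attempt, so retrying only adds delay.
  const permanent =
    (result.status >= 400 && result.status < 500 && result.status !== 429) ||
    (ok && !result.bodyIsValid);
  if (permanent) return "fail-permanently";

  // Everything else (for example 5xx or 429) is treated as transient
  // until the attempts run out.
  return attempt < maxAttempts ? "retry" : "fail-permanently";
}
```

Without a check like this, a message that can never succeed simply burns through its retries and leaves the report in whatever intermediate state it had reached.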

Even more frustrating, when handling failed reports, we relied on a "Reaper" — a cron job that ran every hour to resubmit failed reports. Since a report could fail at any step, the Reaper had to deduce which queue failed and send a message to begin reprocessing. This meant:

Debugging was a nightmare: Tracing the journey of a single report meant jumping between logs for multiple queues.

Retries were unreliable: Some queues had retry logic, while others relied on the Reaper, leading to inconsistencies.

State management was painful: We had no clear way to track whether a report was halfway through the pipeline or completely lost, except by looking through the logs.

Operational overhead was high: Developers frequently had to manually inspect failed reports and resubmit them.
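The kind of inference the Reaper had to perform can be sketched as follows. The `ReportRecord` fields and the `deduceResumePoint` function are hypothetical; the real system had to piece this information together from queue state and logs rather than from one tidy record.

```typescript
// Hypothetical record of how far a report got before it failed.
interface ReportRecord {
  id: number;
  ncmecReportId?: number; // set once the report was initiated with NCMEC
  hasImpoundedFiles: boolean;
  filesFetched: boolean;
  filesUploaded: boolean;
  finalized: boolean;
}

type ResumePoint = "initiate" | "fetchFiles" | "uploadFiles" | "finalize" | "nothing";

// Infer the earliest step that still needs to run for a failed report.
function deduceResumePoint(r: ReportRecord): ResumePoint {
  if (r.finalized) return "nothing";
  if (r.ncmecReportId === undefined) return "initiate";
  if (r.hasImpoundedFiles && !r.filesFetched) return "fetchFiles";
  if (r.hasImpoundedFiles && !r.filesUploaded) return "uploadFiles";
  return "finalize";
}
```

Each branch encodes knowledge about the pipeline that lives outside the pipeline itself, so any change to the steps also meant updating this logic.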

Queues gave us a solid foundation for moving messages around, but it wasn’t meant to handle orchestration. What we’d really done was build a bunch of loosely connected steps on top of a message bus and hope it would all hold together. It worked, for the most part, but it was clunky, hard to reason about, and easy to break. Just understanding how a single report moved through the system meant tracing messages across multiple queues and digging through logs.

We knew we needed something better: a way to define workflows explicitly, with clear visibility into where things were and what had failed. But back then, we didn’t have a good way to do that without bringing in heavyweight tools or writing a bunch of glue code ourselves. When Cloudflare Workflows came along, it felt like the missing piece, finally giving us a simple, reliable way to orchestrate everything without duct tape.

Once Cloudflare Workflows was announced, we saw an immediate opportunity to replace our queue-based architecture with a more structured, observable, and retryable system. Instead of relying on a web of multiple queues passing messages to each other, we now have a single workflow that orchestrates the entire process from start to finish. Critically, if any step fails, the Workflow can pick back up from where it left off, without repeating earlier processing steps, re-parsing files, or duplicating uploads.
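The idea of picking up where a run left off can be illustrated with a toy model. The `ToyWorkflowRun` class below is an in-memory stand-in written purely for illustration; it is not how the Workflows engine is implemented, and the step names and values are made up. It only shows the principle: a completed step's result is stored, so a later attempt reuses it instead of running the step again.

```typescript
// Toy, in-memory model of durable steps. This is NOT the Workflows engine;
// it only illustrates why completed steps are not repeated after a failure.
class ToyWorkflowRun {
  // Results of steps that have already completed, keyed by step name.
  private completed = new Map<string, unknown>();
  // Names of steps whose callback actually executed to completion, in order.
  readonly executed: string[] = [];

  step<T>(name: string, fn: () => T): T {
    if (this.completed.has(name)) {
      // Step finished on an earlier attempt: reuse its stored result.
      return this.completed.get(name) as T;
    }
    const result = fn(); // may throw; nothing is stored in that case
    this.completed.set(name, result);
    this.executed.push(name);
    return result;
  }
}

// Hypothetical three-step report whose upload fails once before succeeding.
let uploadAttempts = 0;
function processReport(run: ToyWorkflowRun): string {
  const reportId = run.step("Create Report", () => 42);
  run.step("Upload Files", () => {
    uploadAttempts += 1;
    if (uploadAttempts === 1) throw new Error("transient upload failure");
    return true;
  });
  return run.step("Finalize Report", () => `report ${reportId} finalized`);
}

const run = new ToyWorkflowRun();
let outcome = "";
try {
  outcome = processReport(run); // first attempt fails during upload
} catch {
  outcome = processReport(run); // second attempt reuses the stored "Create Report" result
}
```

On the second attempt the report is not created again: only the upload and finalization callbacks run, which is the behavior that prevents duplicate reports and duplicate uploads.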

With Cloudflare Workflows, each report follows a clear sequence of steps:

Creating the report: The system validates the incoming report and initiates it with NCMEC.

Checking for impounded files: If there are impounded files associated with the report, the workflow proceeds to file collection.

Gathering files: The system retrieves impounded files stored in R2 and prepares them for upload.

Uploading files to NCMEC: Each file is uploaded to NCMEC using their API, ensuring all relevant evidence is submitted.

Adding file metadata: Metadata about the uploaded files (hashes, timestamps, etc.) is attached to the report.

Finalizing the report: Once all files are processed, the report is finalized and marked as complete.

Here’s a simplified version of the orchestrator:

```typescript
import { WorkflowEntrypoint, WorkflowEvent, WorkflowStep } from 'cloudflare:workers';

export class ReportWorkflow extends WorkflowEntrypoint<Env, ReportType> {
  async run(event: WorkflowEvent<ReportType>, step: WorkflowStep) {
    const reportToCreate: ReportType = event.payload;

    try {
      // Return the ID from the step so it is stored with the step's result
      // and is still available if the Workflow resumes after a failure,
      // rather than assigning it to a variable outside the step.
      const reportId = await step.do('Create Report', async () => {
        const createdReport = await createReportStep(reportToCreate, this.env);
        if (!createdReport?.id) throw new Error('Report ID is undefined.');
        return createdReport.id;
      });

      // File handling only applies to reports with impounded files.
      if (reportToCreate.hasImpoundedFiles) {
        await step.do('Gather Files', async () => {
          await gatherFilesStep(reportId, this.env);
        });

        await step.do('Upload Files', async () => {
          await uploadFilesStep(reportId, this.env);
        });

        await step.do('Add File Metadata', async () => {
          await addFilesInfoStep(reportId, this.env);
        });
      }

      await step.do('Finalize Report', async () => {
        await finalizeReportStep(reportId, this.env);
      });
    } catch (error) {
      // Log and rethrow so the failure is visible on the Workflow instance.
      console.error(error);
      throw error;
    }
  }
}
```

Not only can tasks be broken into discrete steps, but the Workflows dashboard gives us real-time visibility into each report processed and the status of each step in the workflow!

This allows us to easily see active and completed workflows, identify which steps failed and where, and retry failed steps or terminate workflows. These features change how we troubleshoot: we can drill into any failure that arises and retry a step with the click of a button.

Below are two dashboard screenshots: the first shows our running workflows, and the second breaks down the successes and failures of each step in a workflow. Some workflows look slower or “stuck” because failed steps are retried with exponential backoff. This helps smooth over transient issues like flaky APIs without manual intervention.

Cloudflare Workflows Dashboard for our NCMEC Workflow

Cloudflare Workflows Dashboard containing a breakout of the NCMEC Workflow Steps
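To show why a retrying workflow can appear “stuck”, the sketch below computes a generic exponential backoff schedule. The base delay, the cap, and the doubling factor are assumptions chosen for illustration; this post does not state the retry settings we use, and `backoffDelayMs` and `backoffSchedule` are hypothetical helpers rather than part of the Workflows API.

```typescript
// Delay before retry number `attempt` (1-based): doubles each time, up to a cap.
function backoffDelayMs(attempt: number, baseMs: number, maxMs: number): number {
  const delay = baseMs * Math.pow(2, attempt - 1);
  return Math.min(delay, maxMs);
}

// Delays for the first `retries` retries, in milliseconds.
function backoffSchedule(retries: number, baseMs: number, maxMs: number): number[] {
  return Array.from({ length: retries }, (_, i) => backoffDelayMs(i + 1, baseMs, maxMs));
}

// Example with an assumed 1-second base delay and a 10-second cap.
const schedule = backoffSchedule(5, 1000, 10000);
console.log(schedule.join(", "));
```

With these assumed values the waits grow from one second to the ten-second cap within five retries, so a step that keeps failing spends most of its time waiting rather than running.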

Cloudflare Workflows transformed how we handle NCMEC incident reports. What was once a complex, queue-based architecture is now a structured, retryable, and observable process. Debugging is easier, error handling is more robust, and monitoring is seamless.

If you’re also building larger, multi-step applications, or have an existing Workers application that has started to approach what we ended up with for our incident reporting process, then you can typically wrap that code within a Workflow with minimal changes. Workflows can read from R2, write to KV, query D1 and call other APIs just like any other Worker, but are designed to help orchestrate asynchronous, long-running tasks.

To get started with Workflows, you can head to the Workflows developer documentation and/or pull down the starter project and dive into the code immediately:

$ npm create cloudflare@latest workflows-starter -- --template="cloudflare/workflows-starter"

Learn more about Cloudflare Workflows, and about using the Cloudflare CSAM Scanning Tool.