Active defense: introducing a stateful vulnerability scanner for APIs

Security is traditionally a game of defense. You build walls, set up gates, and write rules to block traffic that looks suspicious. For years, Cloudflare has been a leader in this space: our Application Security platform is designed to catch attacks in flight, dropping malicious requests at the edge before they ever reach your origin. But for API security, defensive posturing isn’t enough.

That’s why today, we are launching the beta of Cloudflare’s Web and API Vulnerability Scanner.

We are starting with the most pervasive and difficult-to-catch threat on the OWASP API Top 10: Broken Object Level Authorization, or BOLA. We will add more vulnerability scan types over time, including both API and web application threats.

The most dangerous API vulnerabilities today aren’t generic injection attacks or malformed requests that a WAF can easily spot. They are logic flaws—perfectly valid HTTP requests that meet the protocol and application spec but defy the business logic.

To find these, you can’t just wait for an attack. You have to actively hunt for them.

The Web and API Vulnerability Scanner will be available first for API Shield customers. Read on to learn why we are focused on API security scans for this first release.

In the web application world, vulnerabilities often look like syntax errors. A SQL injection attempt looks like code where data should be. A cross-site scripting (XSS) attack looks like a script tag in a form field. These have signatures.

API vulnerabilities are different. To illustrate, let’s imagine a food delivery mobile app that communicates solely with an API on the backend. Let’s take the orders endpoint:

Endpoint Definition: /api/v1/orders

| Method | Resource Path | Description |
| --- | --- | --- |
| GET | /api/v1/orders/{order_id} | Check Status. Returns the tracking status of a specific order (e.g., "Kitchen is preparing"). |
| PATCH | /api/v1/orders/{order_id} | Update Order. Allows the user to modify the drop-off location or add delivery instructions. |

In a broken authorization attack like BOLA, User A (the attacker) requests to update the delivery address of a paid-for order belonging to User B (the victim). The attacker simply inserts User B’s {order_id} in the PATCH request.

Here is what that request looks like, with ‘8821’ as User B’s order ID. Notice that User A is fully authenticated with their own valid token:

    PATCH /api/v1/orders/8821 HTTP/1.1
    Host: api.example.com
    Authorization: Bearer <User_A_Valid_Token>
    Content-Type: application/json

    {
      "delivery_address": "123 Attacker Way, Apt 4",
      "instructions": "Leave at front door, ring bell"
    }

The request headers are valid. The authentication token is valid. The schema is correct. To a standard WAF, this request looks perfect. A bot management offering may even be fooled if a human is manually sending the attack requests.

User A will now get User B’s food delivered to them! The vulnerability exists because the API endpoint fails to validate whether User A actually has permission to view or update User B’s data. This is a failure of logic, not syntax. To fix this, the API developer could implement a simple ownership check: if (order.userID != user.ID) throw Unauthorized;
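As a rough illustration of that ownership check, here is a minimal Python sketch. The `Order` class, the `update_delivery_address` function, the numeric user IDs, and the victim's original address are all hypothetical names and values chosen for this example; they are not part of any real API.

```python
from dataclasses import dataclass


@dataclass
class Order:
    order_id: int
    user_id: int  # the user who created (owns) the order
    delivery_address: str


def update_delivery_address(order: Order, caller_user_id: int, new_address: str) -> Order:
    """Apply the PATCH only if the authenticated caller owns the order."""
    # Object-level authorization: a valid token is not enough,
    # the caller must also own this specific object.
    if order.user_id != caller_user_id:
        raise PermissionError("caller does not own this order")
    order.delivery_address = new_address
    return order


# Hypothetical IDs: User B (id 2) owns order 8821; User A (id 1) is the attacker.
order = Order(order_id=8821, user_id=2, delivery_address="9 Victim Road")
try:
    update_delivery_address(order, caller_user_id=1, new_address="123 Attacker Way, Apt 4")
except PermissionError as exc:
    print(f"rejected: {exc}")
```

The important design point is that the check compares the object's owner with the authenticated caller on every request, rather than trusting that a valid token implies access to any order ID.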

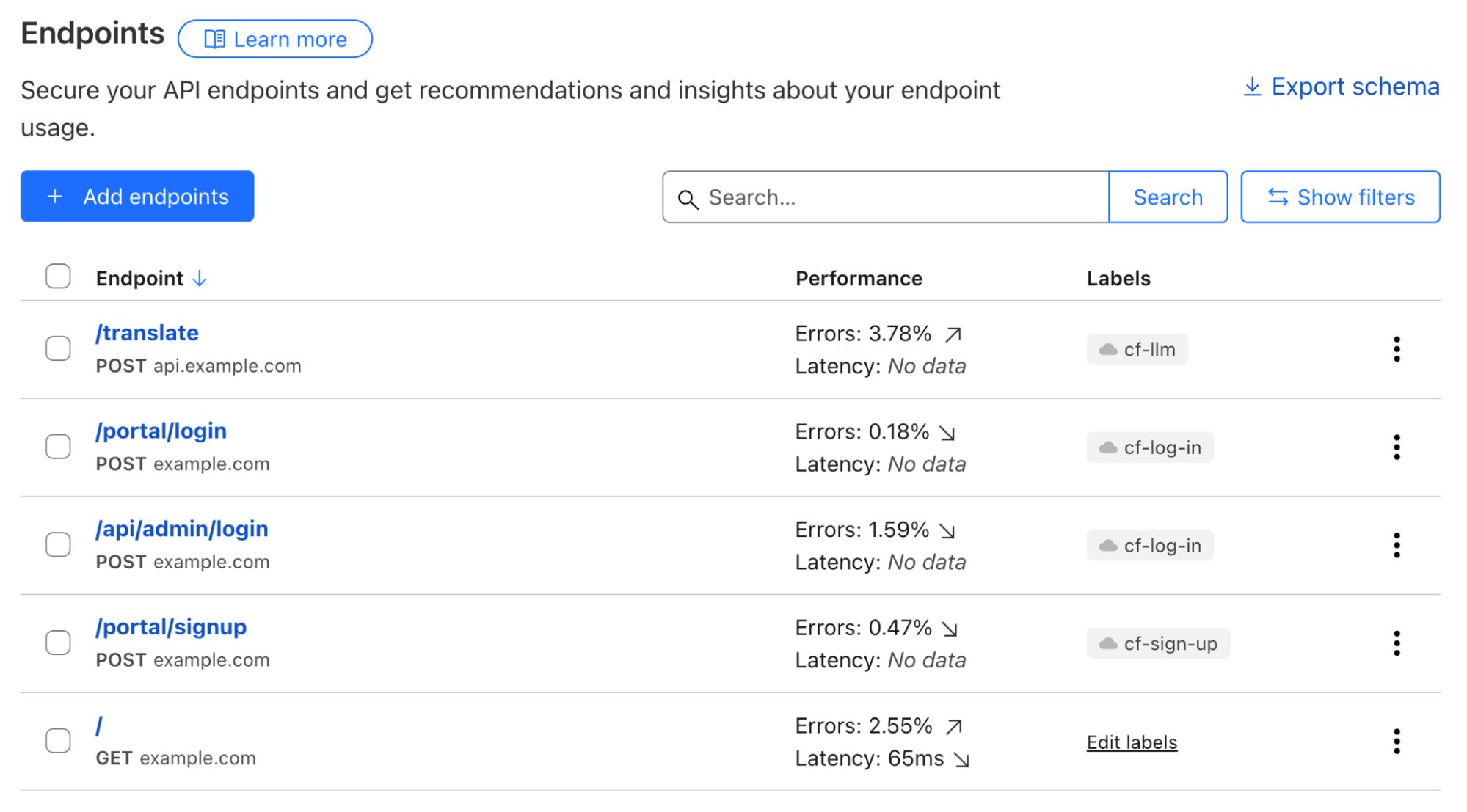

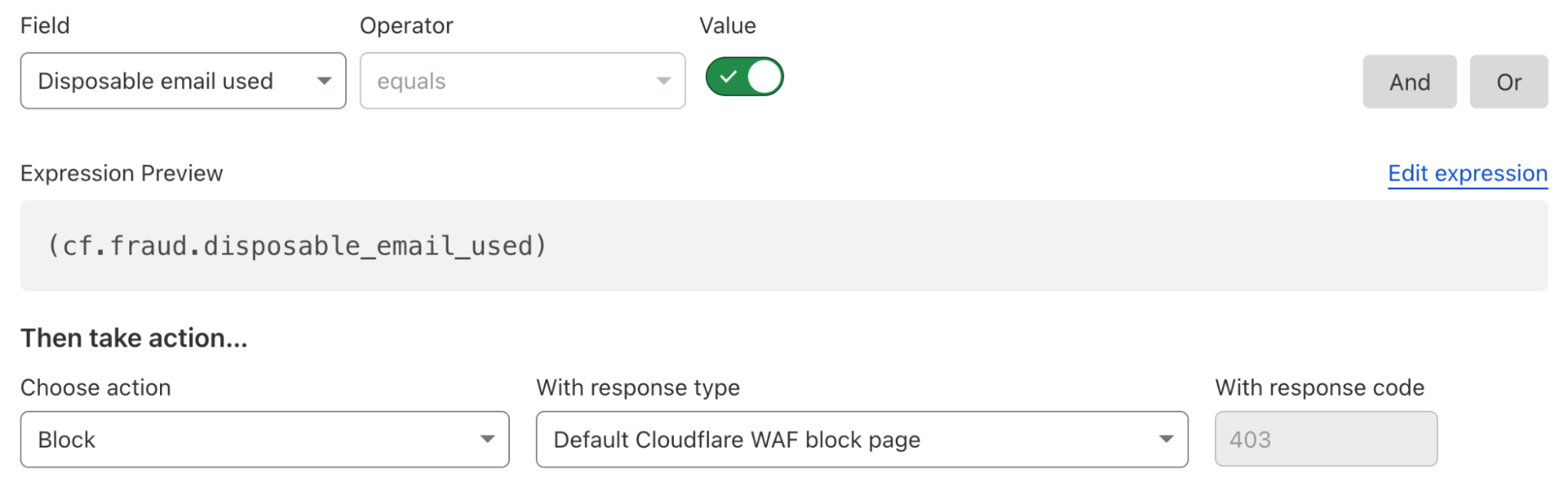

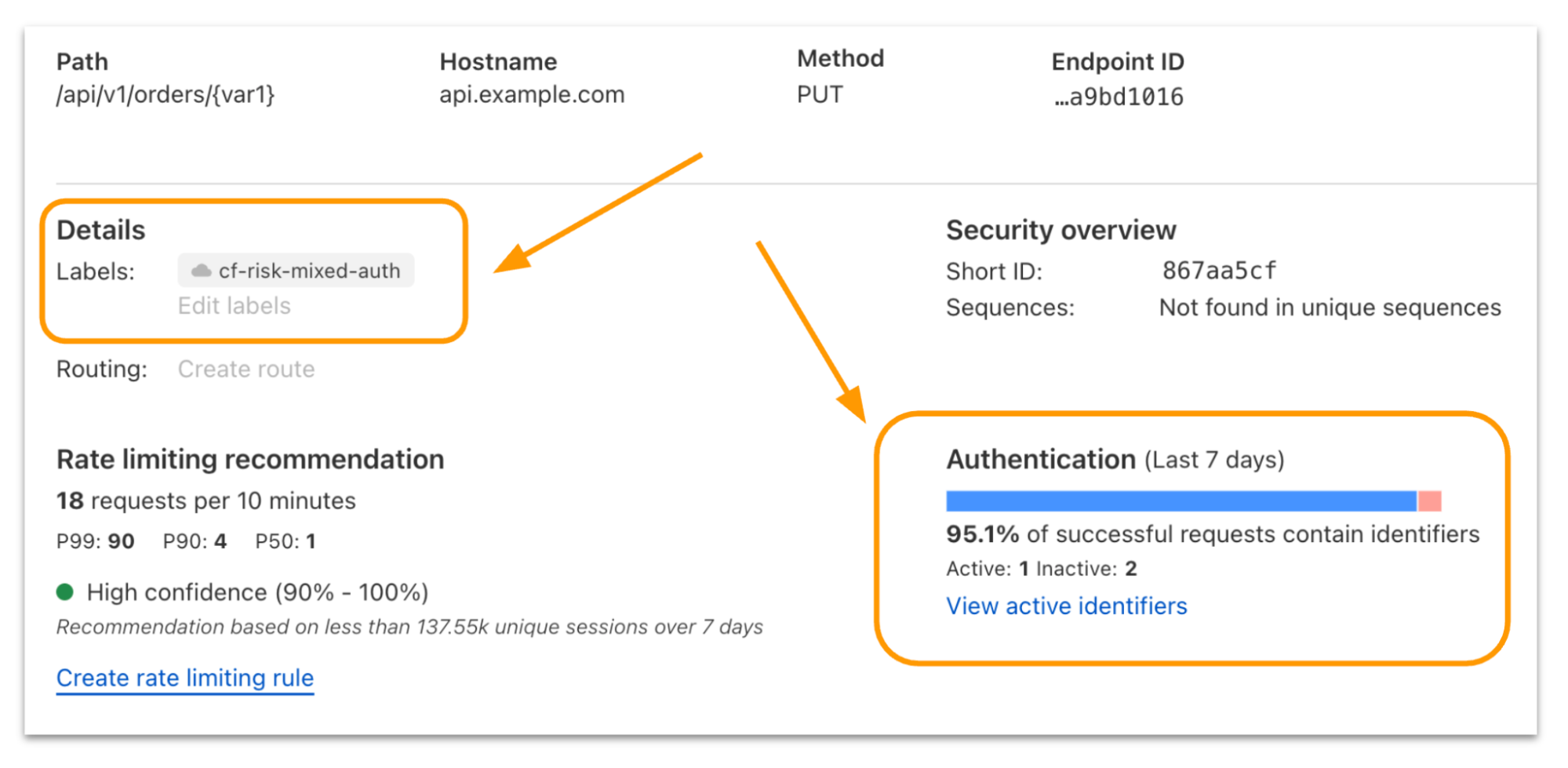

You can detect these types of vulnerabilities by actively sending API test traffic or passively listening to existing API traffic. Finding these vulnerabilities through passive scanning requires context. Last year we launched BOLA vulnerability detection for API Shield. This detection automatically finds these vulnerabilities by passively scanning customer traffic for usage anomalies. To be successful with this type of scanning, you need to know what a "valid" API call looks like, what the variable parameters are, how a typical user behaves, and how the API behaves when those parameters are manipulated.

Yet there are reasons security teams may not have any of that context, even with access to API Shield’s BOLA vulnerability detection. Development environments may need to be tested but lack user traffic. Production environments may (thankfully) have a lack of attack traffic yet still need analysis, and so on. In these circumstances, and to be proactive in general, teams can turn to Dynamic Application Security Testing (DAST). By creating net-new traffic profiles intended specifically for security testing, DAST tools can look for vulnerabilities in any environment at any time.

Unfortunately, traditional DAST tools have a high barrier to entry. They are often difficult to configure, require you to manually upload and maintain Swagger/OpenAPI files, struggle to authenticate correctly against modern complex login flows, and can simply lack any API-specific security tests (e.g. BOLA).

In the food delivery order example above, we assumed the attacker could find a valid order to modify. While there are often avenues for attackers to gather this type of intelligence in a live production environment, in a security testing exercise you must create your own objects before testing the API’s authorization controls. For typical DAST scans, this can be a problem, because many scanners treat each individual request on its own. This method fails to chain requests together in the logical pattern necessary to find broken authorization vulnerabilities. Legacy DAST scanners can also exist as an island within your security tooling and orchestration environment, preventing their findings from being shared or viewed in context.

Vulnerability scanning from Cloudflare is different for a few key reasons.

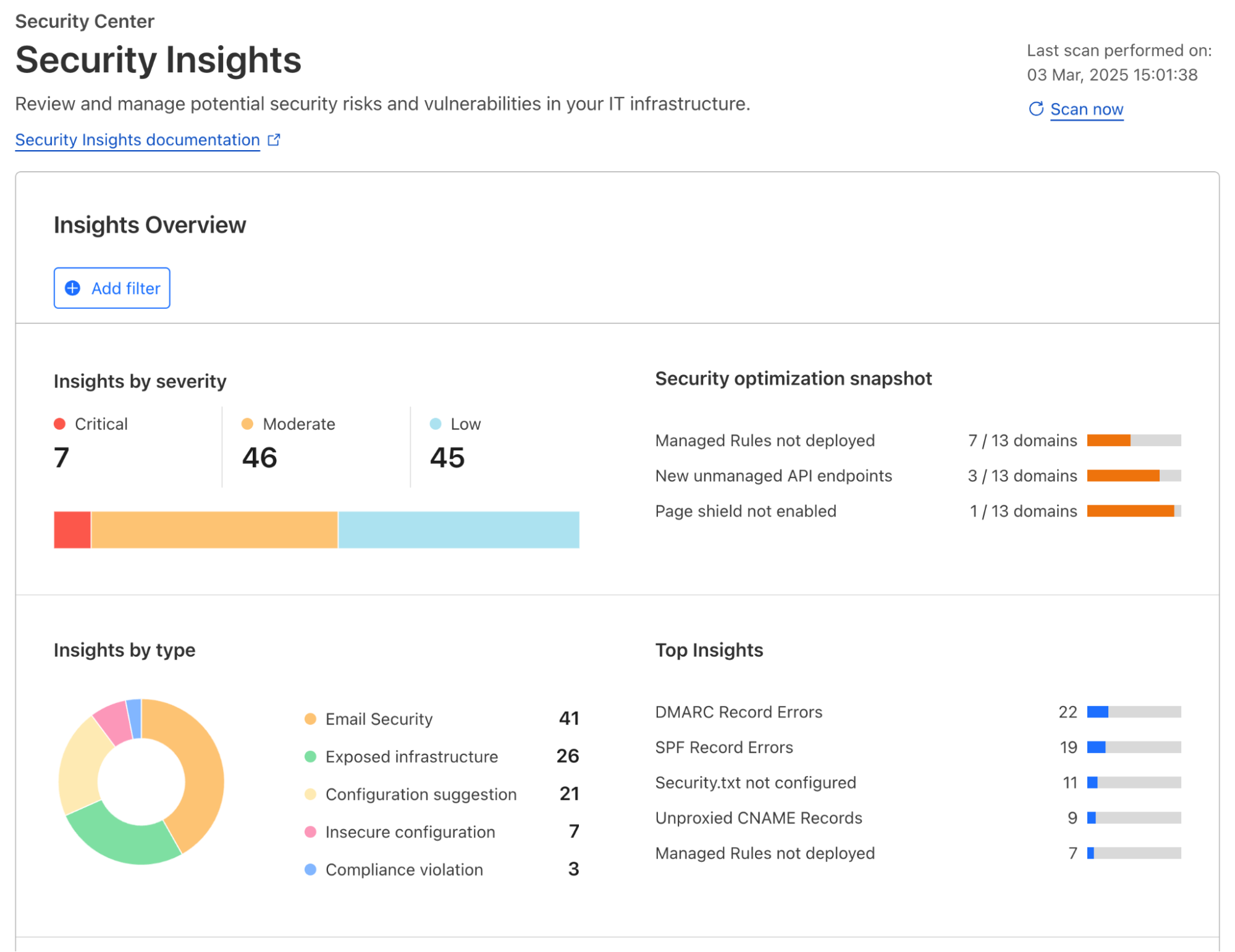

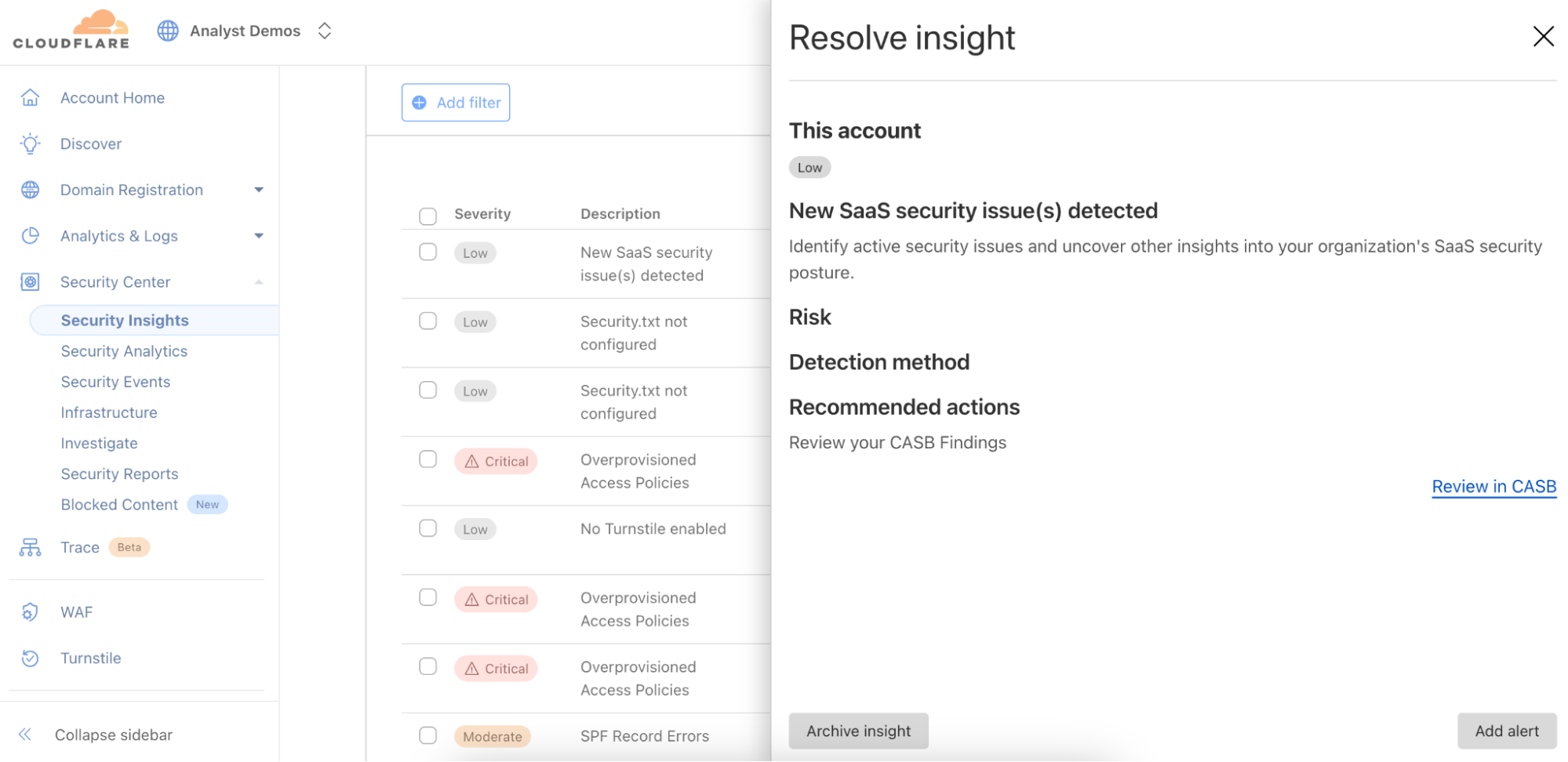

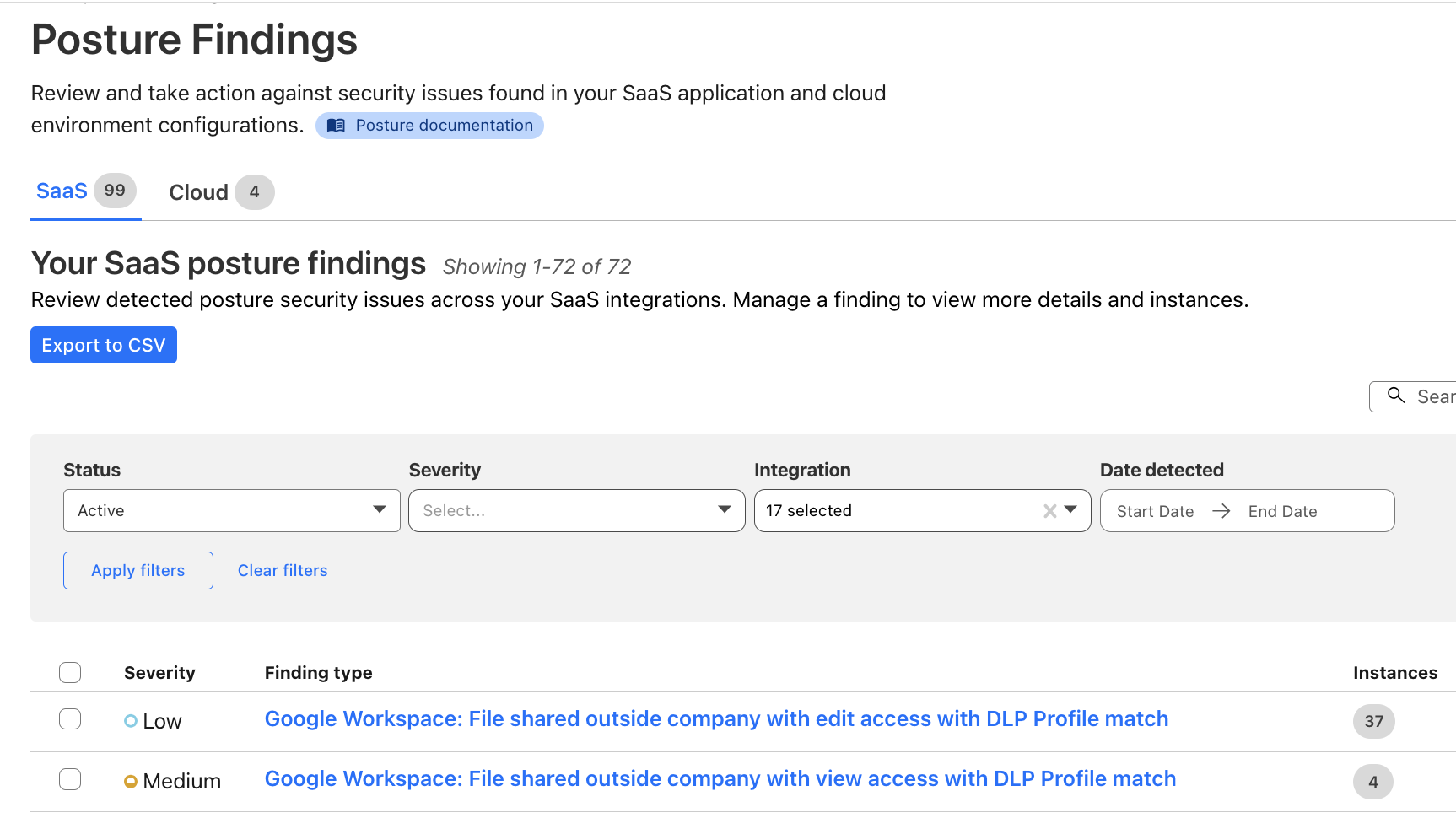

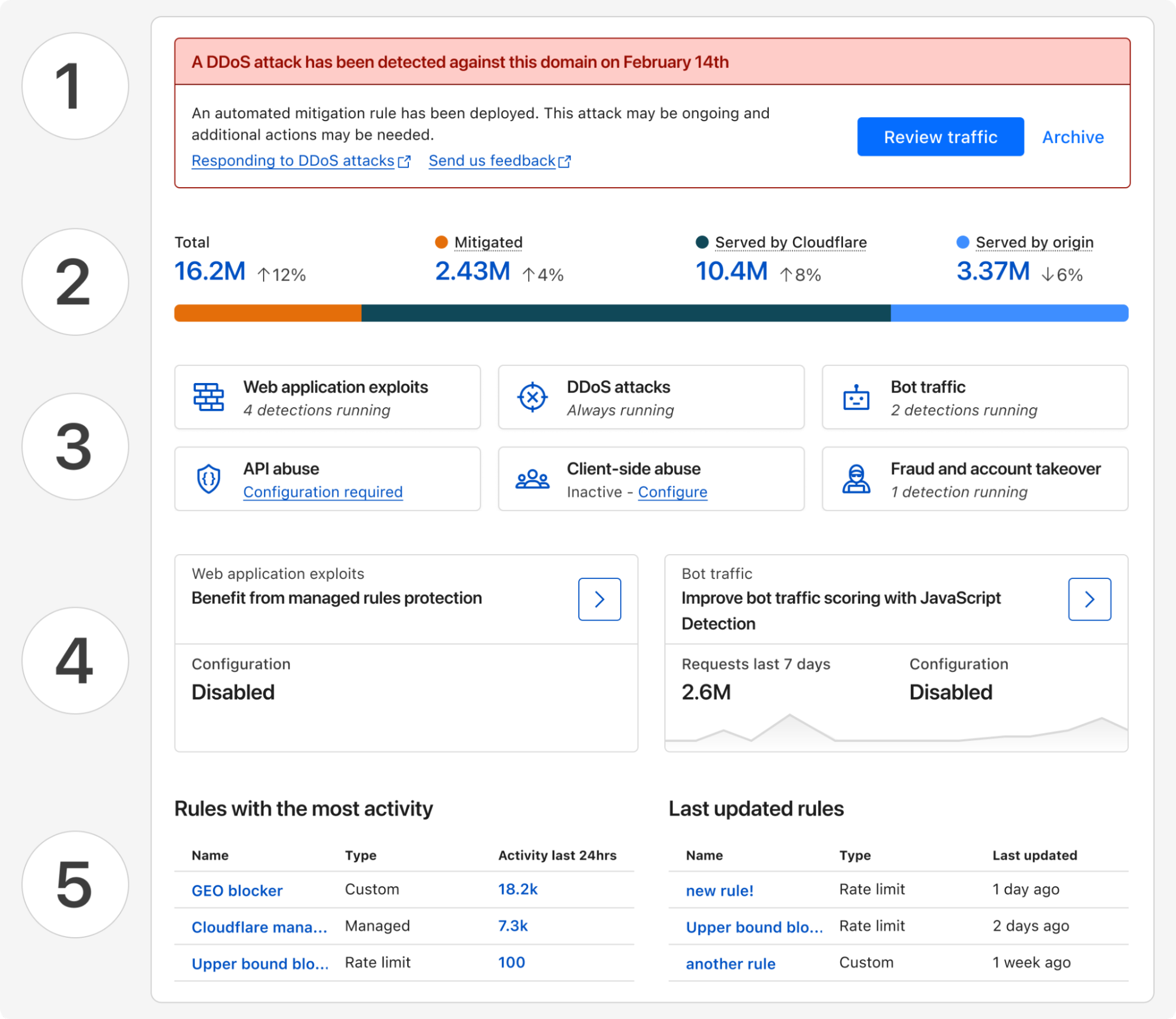

First, Security Insights will list results from our new scans alongside any existing Cloudflare security findings for added context. You’ll see all your posture management information in one place.

Second, if you are an API Shield customer, Cloudflare already understands your API’s inputs and outputs: our API Discovery and Schema Learning features passively catalog your endpoints and learn your traffic patterns. For this initial release you’ll still need to manually upload an OpenAPI spec to get started, but a future release will let you get started quickly without one.

Third, because we sit at the edge, we can turn passive traffic inspection knowledge into active intelligence. It will be easy to verify BOLA vulnerability detection risks (found via traffic inspection) by sending net-new HTTP requests with the vulnerability scanner.

And finally, we have built a new, stateful DAST platform, as we detail below. Most scanners require hours of setup to "teach" the tool how to talk to your API. With Cloudflare, you can effectively skip that step and get started quickly. You provide the API credentials, and we’ll use your API schemas to automatically construct a scan plan.

APIs are commonly documented using OpenAPI schemas. These schemas denote the host, method, and path (commonly, an “endpoint”) along with the expected parameters of incoming requests and resulting responses. In order to automatically build a scan plan, we must first make sense of these API specifications for any given API to be scanned.

Our scanner works by building an API call graph from an OpenAPI document and then walking it using attacker and owner contexts. Owners create resources; attackers then try to access them. Attackers are fully authenticated with their own set of valid credentials. If an attacker successfully reads, modifies, or deletes a resource they do not own, an authorization vulnerability is found.
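The owner/attacker pattern can be sketched in a few lines of Python. This is an illustrative sketch, not the scanner's implementation: `probe_bola`, `make_fake_api`, the token strings, and the rule "any 2xx response to a non-owner is a finding" are assumptions made for the example, and the in-memory fake API exists only so the probe has something to run against.

```python
from typing import Callable, Optional

# send(method, path, token, body) -> (status_code, json_body)
Send = Callable[[str, str, str, Optional[dict]], tuple]


def probe_bola(send: Send, owner_token: str, attacker_token: str) -> list:
    """Owner creates an order; attacker then tries to reach it with valid credentials."""
    status, body = send("POST", "/api/v1/orders", owner_token, {"product": "pizza", "count": 1})
    if status != 201:
        raise RuntimeError("owner could not create the test resource")
    path = f"/api/v1/orders/{body['result']['id']}"

    findings = []
    for method, payload in (("GET", None), ("PATCH", {"instructions": "probe"})):
        status, _ = send(method, path, attacker_token, payload)
        if 200 <= status < 300:  # attacker reached an object they do not own
            findings.append(f"{method} {path} returned {status} to a non-owner")
    return findings


def make_fake_api(check_ownership: bool) -> Send:
    """In-memory stand-in for the target API, used only to exercise the probe."""
    orders = {}

    def send(method, path, token, body):
        if method == "POST":
            order_id = 8821 + len(orders)
            orders[order_id] = token  # remember which token created the order
            return 201, {"result": {"id": order_id}, "errors": []}
        order_id = int(path.rsplit("/", 1)[1])
        if order_id not in orders:
            return 404, {}
        if check_ownership and orders[order_id] != token:
            return 403, {}
        return 200, {}

    return send


print(probe_bola(make_fake_api(check_ownership=False), "owner-token", "attacker-token"))
print(probe_bola(make_fake_api(check_ownership=True), "owner-token", "attacker-token"))
```

Against the fake API without ownership checks, the probe reports both the GET and the PATCH; with checks enabled it reports nothing. A real scanner would likely need more than a status code to confirm a finding, since some APIs return 200 with an error payload.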

Consider for example the above delivery order with ID 8821. For the server-side resource to exist, it needed to be originally created by an owner, most likely in a “genesis” POST request with no or minimal dependencies (previous necessary calls and resulting data). Modelling the API as a call graph, such an endpoint constitutes a node with no or few incoming edges (dependencies). Any subsequent request, such as the attacker’s PATCH above, then has a data dependency (the data is order_id) on the genesis request (the POST). Without all data provided, the PATCH cannot proceed.
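To make the call-graph idea concrete, the sketch below models endpoints as nodes and data dependencies as edges, then orders the calls so that the genesis request runs first. The `DEPENDENCIES` mapping and the `plan_for` helper are hypothetical and hand-written for this example; it uses Python's standard `graphlib` module (Python 3.9+) for the ordering.

```python
from graphlib import TopologicalSorter

# Each endpoint maps to the endpoints whose responses it needs data from.
DEPENDENCIES = {
    "POST /api/v1/orders": set(),  # genesis: no incoming edges
    "GET /api/v1/orders/{order_id}": {"POST /api/v1/orders"},
    "PATCH /api/v1/orders/{order_id}": {"POST /api/v1/orders"},
}


def plan_for(target: str, dependencies: dict) -> list:
    """Return the calls needed to reach `target`, prerequisites first."""
    needed = {}
    stack = [target]
    while stack:  # collect the target and all transitive dependencies
        node = stack.pop()
        if node not in needed:
            needed[node] = set(dependencies[node])
            stack.extend(needed[node])
    # static_order() yields every node after all of its predecessors
    return list(TopologicalSorter(needed).static_order())


print(plan_for("PATCH /api/v1/orders/{order_id}", DEPENDENCIES))
# prints ['POST /api/v1/orders', 'PATCH /api/v1/orders/{order_id}']
```

Restricting the graph to the target's transitive dependencies keeps each test plan small: the GET endpoint is not called when the goal is only to reach the PATCH.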

Here we see in purple arrows the nodes in this API graph that are necessary to visit to add a note to an order via the POST /api/v1/orders/{order_id}/note/{note_id} endpoint. Importantly, none of the steps or logic shown in the diagram is available in the OpenAPI specification! It must be inferred logically through some other means, and that is exactly what our vulnerability scanner will do automatically.

In order to reliably and automatically plan scans across a variety of APIs, we must accurately model these endpoint relationships from scratch. However, two problems arise: data quality of API specifications is not guaranteed, and even functionally complete schemas can have ambiguous naming schemes. Consider a simplified OpenAPI specification for the above API, which might look like this:

    openapi: 3.0.0
    info:
      title: Order API
      version: 1.0.0
    paths:
      /api/v1/orders:
        post:
          summary: Create an order
          requestBody:
            required: true
            content:
              application/json:
                schema:
                  type: object
                  properties:
                    product:
                      type: string
                    count:
                      type: integer
                  required:
                    - product
                    - count
          responses:
            '201':
              description: Item created successfully
              content:
                application/json:
                  schema:
                    type: object
                    properties:
                      result:
                        type: object
                        properties:
                          id:
                            type: integer
                          created_at:
                            type: integer
                      errors:
                        type: array
                        items:
                          type: string
      /api/v1/orders/{order_id}:
        patch:
          summary: Modify an order by ID
          parameters:
            - name: order_id
              in: path

We can see that the POST endpoint returns responses such as:

    {
      "result": {
        "id": 8821,
        "created_at": 1741476777
      },
      "errors": []
    }

To a human observer, it is quickly evident that $.result.id is the value to be injected in order_id for the PATCH endpoint. The id property might also be called orderId, value or something else, and be nested arbitrarily. These subtle inconsistencies in OpenAPI documents of arbitrary shape are intractable for heuristics-based approaches.
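Once the mapping from $.result.id to order_id is known, applying it is mechanical. The following sketch shows that step only; `extract` and `fill_path` are hypothetical helpers, and `extract` handles just dotted object keys rather than full JSONPath.

```python
def extract(document: dict, path: str):
    """Resolve a dotted path such as '$.result.id' (object keys only)."""
    if not path.startswith("$."):
        raise ValueError(f"unsupported path: {path}")
    value = document
    for key in path[2:].split("."):
        value = value[key]
    return value


def fill_path(template: str, params: dict) -> str:
    """Substitute {name} placeholders in an OpenAPI path template."""
    for name, value in params.items():
        template = template.replace("{" + name + "}", str(value))
    return template


post_response = {"result": {"id": 8821, "created_at": 1741476777}, "errors": []}
order_id = extract(post_response, "$.result.id")
print(fill_path("/api/v1/orders/{order_id}", {"order_id": order_id}))
# prints /api/v1/orders/8821
```

The hard part is not this substitution but deciding which response field feeds which request parameter when names and nesting differ between endpoints.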

Our scanner uses Cloudflare’s own Workers AI platform to tackle this fuzzy problem space. Models such as OpenAI’s open-weight gpt-oss-120b are powerful enough to match data dependencies reliably, and to generate realistic fake data where necessary, essentially filling in the blanks of OpenAPI specifications. Leveraging structured outputs, the model produces a representation of the API call graph for our scanner to walk, injecting attacker and owner credentials appropriately.
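One benefit of structured outputs is that a model's answer can be checked mechanically before anything is executed. The sketch below assumes a purely hypothetical plan format (the `steps`, `actor`, `inject`, `from_step`, and `json_path` fields are invented for this example and are not the scanner's actual schema) and rejects plans whose dependencies cannot be satisfied.

```python
import json
import re

ACTORS = {"owner", "attacker"}


def validate_plan(raw: str) -> list:
    """Parse a model-produced plan and reject anything the scanner could not execute."""
    steps = json.loads(raw)["steps"]
    seen = set()
    for step in steps:
        if step["actor"] not in ACTORS:
            raise ValueError(f"unknown actor in step {step['id']}")
        placeholders = set(re.findall(r"\{(\w+)\}", step["path"]))
        if placeholders != set(step["inject"]):
            raise ValueError(f"unresolved path parameters in step {step['id']}")
        for source in step["inject"].values():
            if source["from_step"] not in seen:  # data must come from an earlier call
                raise ValueError(f"step {step['id']} depends on a later or missing step")
        seen.add(step["id"])
    return steps


# Hypothetical model output for the order API described above.
MODEL_OUTPUT = """
{"steps": [
  {"id": "create_order", "actor": "owner", "method": "POST",
   "path": "/api/v1/orders", "inject": {}},
  {"id": "patch_order", "actor": "attacker", "method": "PATCH",
   "path": "/api/v1/orders/{order_id}",
   "inject": {"order_id": {"from_step": "create_order", "json_path": "$.result.id"}}}
]}
"""

plan = validate_plan(MODEL_OUTPUT)
print([f"{s['actor']}: {s['method']} {s['path']}" for s in plan])
```

Validating the plan this way means a malformed or hallucinated dependency fails fast as a `ValueError` instead of producing a misleading request against the target API.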

This approach replaces the human intelligence otherwise needed to infer authorization and data relationships in OpenAPI schemas with artificial intelligence that does the same. Structured outputs bridge the gap from the natural language world of gpt-oss back to machine-executable instructions. Beyond solving the planning problem, self-hosting on Workers AI means our system automatically benefits from Cloudflare’s highly available, globally distributed architecture.

Building a vulnerability scanner that customers will trust with their API credentials demands proven infrastructure. We did not reinvent the wheel here. Instead, we integrated services that have been validated and deployed across Cloudflare for two crucial components of our scanner platform: the scanner’s control plane and the scanner’s secrets store.

The scanner's control plane uses Temporal, on which other internal services at Cloudflare already rely, for scan orchestration. Temporal's durable execution framework manages the complexity of the numerous test plans executed in each scan.

The entire backend is written in Rust, which is widely adopted at Cloudflare for infrastructure services. This lets us reuse internal libraries and share architectural patterns across teams. It also positions our scanner for potential future integration with other Cloudflare systems like FL2 or our test framework Flamingo – enabling scenarios where scanning could coordinate more tightly with edge request handling or testing infrastructure.

Scanning for broken authentication and broken authorization vulnerabilities requires handling API user credentials. Cloudflare takes this responsibility very seriously.

We ensure that our public API layer has minimal access to unencrypted customer credentials by using HashiCorp Vault's Transit Secrets Engine (TSE) for encryption-as-a-service. Immediately upon submission, credentials are encrypted by TSE, which performs the encryption but does not store the result; the ciphertext is then stored on Cloudflare infrastructure.

Our API is not authorized to decrypt this data. Instead, decryption occurs only at the last stage when a TestPlan makes a request to the customer's infrastructure. Only the Worker executing the test is authorized to request decryption, a restriction we strengthen using strict typing with additional safety rails inside Rust to enforce minimal access to decryption methods.

We further secure our customers’ credentials through regular rotation and periodic rewraps using TSE to mitigate risk. This process means we only interact with the new ciphertext, and the original secret is kept unviewable.
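For readers unfamiliar with Vault's Transit engine, the sketch below builds the request path and JSON body for its encrypt and rewrap HTTP endpoints, without sending anything. The helper names, the key name `scanner-credentials`, the sample token, the placeholder ciphertext, and the default `transit` mount path are assumptions for illustration; this is not a description of Cloudflare's integration.

```python
import base64
import json


def encrypt_request(key_name: str, secret: str, mount: str = "transit") -> tuple:
    """Build the path and JSON body for a Transit encrypt call."""
    # Transit expects the plaintext base64-encoded.
    plaintext_b64 = base64.b64encode(secret.encode("utf-8")).decode("ascii")
    return f"/v1/{mount}/encrypt/{key_name}", json.dumps({"plaintext": plaintext_b64})


def rewrap_request(key_name: str, ciphertext: str, mount: str = "transit") -> tuple:
    """Build the path and JSON body for a Transit rewrap call."""
    # Rewrap takes ciphertext in and returns ciphertext under the latest key
    # version; the plaintext never appears in the request or the response.
    return f"/v1/{mount}/rewrap/{key_name}", json.dumps({"ciphertext": ciphertext})


# Hypothetical key name, credential, and ciphertext placeholder.
enc_path, enc_body = encrypt_request("scanner-credentials", "Bearer example-token")
wrap_path, wrap_body = rewrap_request("scanner-credentials", "vault:v1:PLACEHOLDER")
print(enc_path, enc_body)
print(wrap_path, wrap_body)
```

The rewrap call illustrates why periodic rewraps are low risk: the service performing them handles only old and new ciphertext, never the decrypted credential.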

We are releasing BOLA vulnerability scanning starting today as an Open Beta for all API Shield customers, and are working on additional API threat scans for future releases. Via the Cloudflare API, you can trigger scans, manage configuration, and retrieve results programmatically to integrate directly into your CI/CD pipelines or security dashboards. For API Shield customers: check the developer docs to start scanning your endpoints for BOLA vulnerabilities today.

We are starting with BOLA vulnerabilities because they are the hardest API vulnerability to solve and the highest risk for our customers. However, this scanning engine is built to be extensible.

In the near future, we plan to expand the scanner’s capabilities to cover the most popular of the OWASP Web Top 10 as well: classic web vulnerabilities like SQL injection (SQLi) and cross-site scripting (XSS). To be notified upon release, sign up for the waitlist here, and you’ll be first to learn when we expand the engine to general web application vulnerabilities.