In 2026, hybrid warfare is no longer a theoretical construct discussed in policy circles; it is shaping geopolitical conflict in real time. The convergence of cyber warfare and kinetic attacks has transformed how nations project power, blending missiles, malware, and misinformation into unified campaigns. What distinguishes modern hybrid warfare from earlier conflicts is not just the presence of digital operations, but their synchronization with physical strikes to produce layered, systemic disruption.

Nowhere is this more evident than in the Middle East, where escalating tensions have turned the region into a proving ground for cyber-physical warfare. Governments, energy systems, financial networks, and communication infrastructures are being targeted simultaneously, exposing vulnerabilities that extend far beyond national borders. The result is a battlespace where the frontlines are both physical and invisible, and where disruption can ripple globally within hours.

From Conflict to Convergence: The Rise of Cyber-Physical Warfare

The turning point came on February 28, 2026, when coordinated military and cyber campaigns marked a new phase in hybrid war strategy. Joint operations combined airstrikes with cyberattacks, information warfare, and psychological operations, targeting nuclear facilities, military assets, and digital infrastructure in parallel. Internet connectivity in targeted regions dropped to as low as 1–4% of normal levels during the initial assault, demonstrating the effectiveness of integrated cyber warfare and kinetic attacks.

These operations were not designed for immediate destruction alone. Instead, they aimed to disorient command structures, disrupt civilian communication, and weaken public trust. Digital interference extended to media channels and widely used mobile applications, some of which were compromised to spread false information and induce panic.

The response was equally multifaceted. Within 72 hours, missile and drone strikes were accompanied by a surge in cyber activity, including spear-phishing campaigns, ransomware-style attacks, and coordinated data exfiltration efforts targeting energy grids, airports, and financial institutions.

Hacktivists as Force Multipliers in Modern Hybrid Warfare

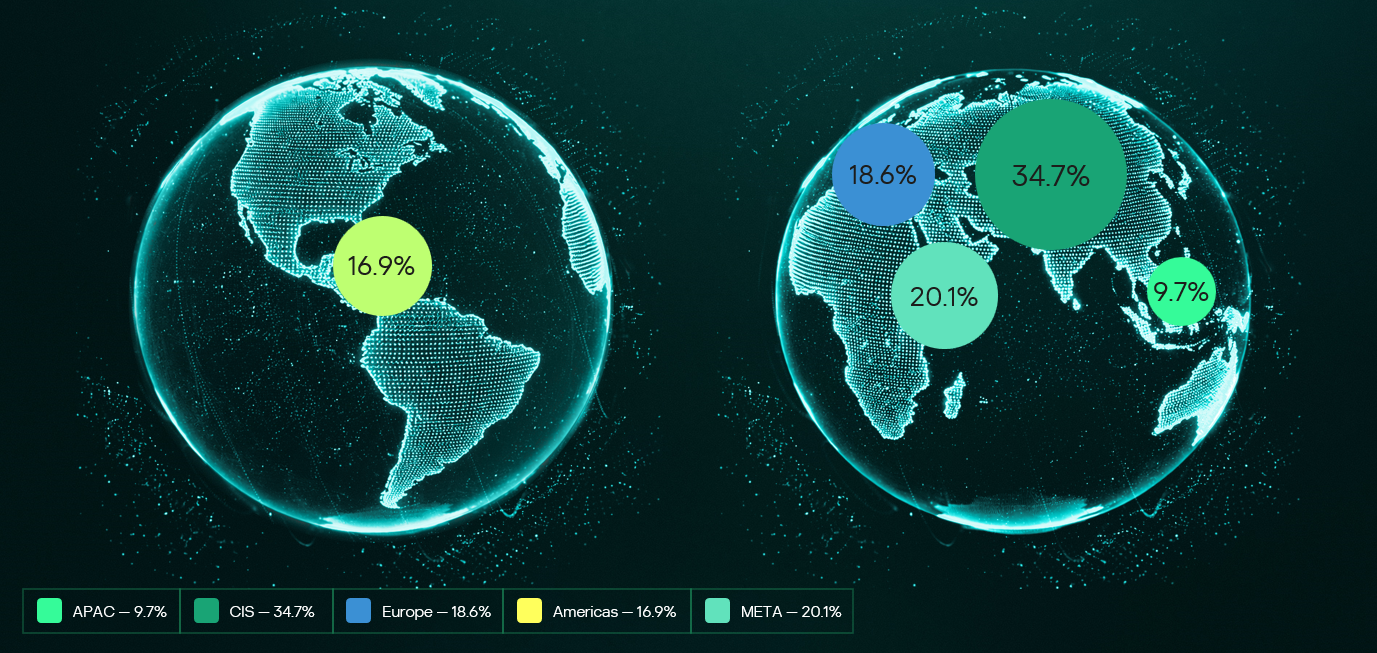

One of the defining characteristics of modern hybrid warfare is the role of non-state actors. More than 70 hacktivist groups became active participants in the 2026 conflict, blurring the lines between state-sponsored operations and independent cyber activism. These groups executed distributed denial-of-service (DDoS) attacks, website defacements, and credential harvesting campaigns across multiple countries.

Their involvement amplifies the scale and unpredictability of cyber warfare and kinetic attacks. While some groups operate with ideological motivations, others appear loosely aligned with state objectives, acting as force multipliers without formal attribution. This ambiguity complicates response strategies and increases the risk of escalation.

More targeted cyber campaigns also emerged during this period, including fake missile alert applications designed to harvest sensitive user data such as contacts, messages, and device identifiers. These tools showed a level of technical refinement typically associated with advanced persistent threat (APT) groups.

Iranian Cyber Capabilities and Strategic Depth

Despite early disruptions to its infrastructure, Iran maintained a resilient cyber posture throughout the conflict. Established threat groups continued to conduct espionage, infrastructure attacks, and credential theft operations targeting sectors such as energy, aviation, and telecommunications.

Parallel to these efforts, Iran-aligned hacktivist groups escalated disruptive campaigns, including industrial control system intrusions and data leaks. Some reports suggest coordination with Russia-linked actors.

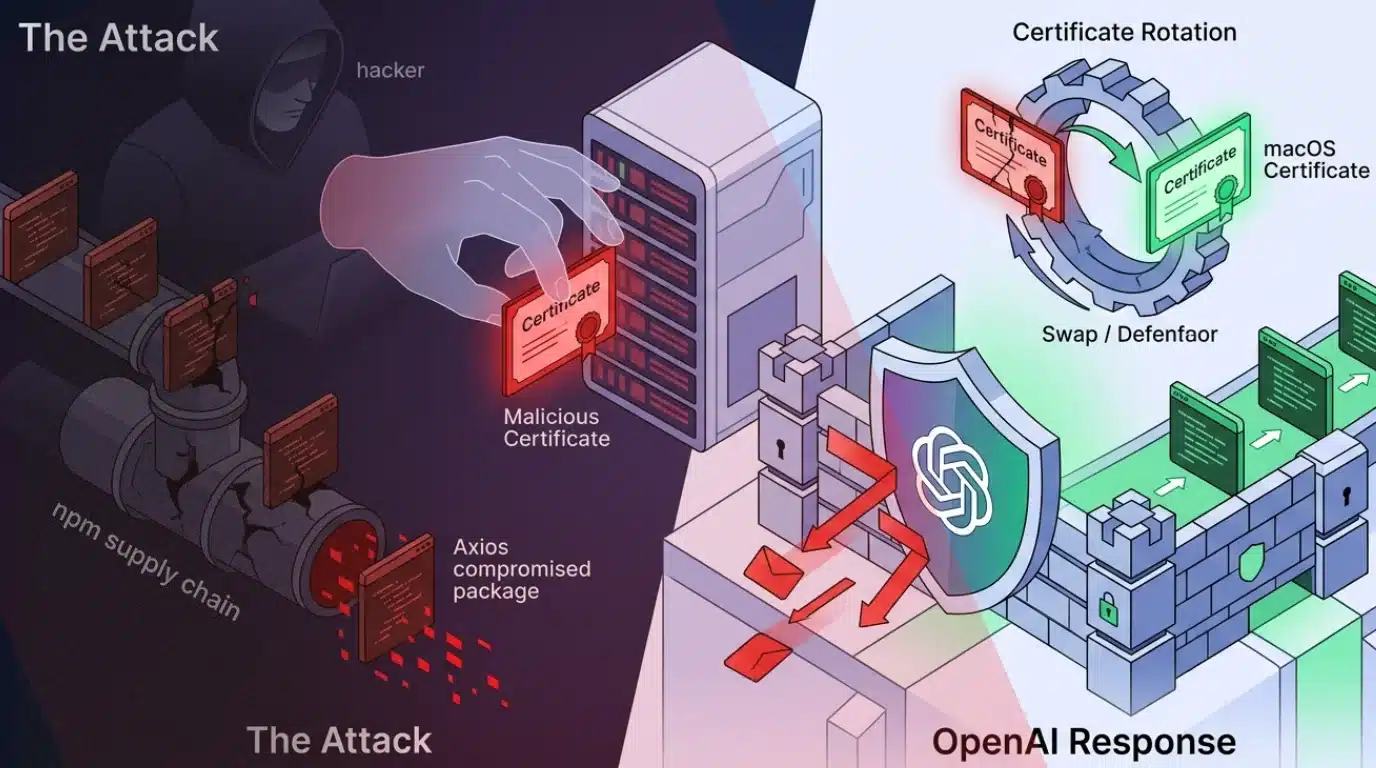

A notable example is the emergence of hybrid threat actors employing destructive malware. Tools designed to overwrite system data, disable operating systems, and wipe the systems that run critical infrastructure highlight a shift toward more aggressive cyber-physical warfare tactics. These operations are often executed in stages: initial access through phishing or exposed services, lateral movement using legitimate system tools, and eventual payload deployment designed for maximum disruption.
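The staged pattern described above can be sketched as a simple ordering check over detection events. This is a minimal illustration, not a description of any vendor's detection logic: the `Event` type, the stage labels, and the assumption that an upstream detector has already tagged each event with a stage are all hypothetical. It also simplifies lateral movement to a per-host view, whereas real intrusions span several machines.

```python
from dataclasses import dataclass

# Hypothetical stage labels, in the order the article describes them.
STAGES = ("initial_access", "lateral_movement", "payload_deployment")


@dataclass(frozen=True)
class Event:
    host: str
    timestamp: int  # seconds since an arbitrary epoch
    stage: str      # assumed to be assigned by an upstream detector


def hosts_with_full_chain(events):
    """Return the sorted hosts whose events contain every stage in order."""
    progress = {}  # host -> index of the next stage we expect to see
    for ev in sorted(events, key=lambda e: e.timestamp):
        idx = progress.get(ev.host, 0)
        # Advance only when the event matches the next expected stage.
        if idx < len(STAGES) and ev.stage == STAGES[idx]:
            progress[ev.host] = idx + 1
    return sorted(h for h, idx in progress.items() if idx == len(STAGES))
```

A host that shows payload activity without the earlier stages is not returned, which keeps the check focused on the full progression rather than on isolated alerts.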

Infrastructure Disruption and Global Spillover Effects

The consequences of hybrid warfare are not confined to the immediate conflict zone. Early incidents in 2026 disrupted fuel distribution in Jordan and interfered with navigation systems, affecting over 1,100 vessels near the Strait of Hormuz. These disruptions pose significant risks to global oil and gas supply chains, illustrating how localized cyber warfare and kinetic attacks can have worldwide economic implications.

Countries like India are experiencing indirect exposure due to interconnected digital ecosystems. Supply chain dependencies, shared technologies, and cloud-based services create pathways for cyber threats to propagate across borders. Vulnerabilities in widely used platforms, including VPNs and enterprise communication systems, are being actively exploited.

Attackers are also leveraging AI-driven techniques to enhance their effectiveness. Phishing campaigns now use highly personalized messaging, while automated reconnaissance tools map organizational structures to identify high-value targets. These capabilities reduce the time required to execute complex attacks and increase their success rates.

Cybercrime Exploitation in a Hybrid War Environment

Geopolitical instability has created fertile ground for cybercriminal activity. More than 8,000 domains linked to the 2026 conflict have been registered, many serving as platforms for scams, malware distribution, and misinformation campaigns.

Examples include fake donation websites, fraudulent e-commerce platforms, and cryptocurrency schemes designed to exploit public sentiment. Conflict-themed malware, often disguised as alert systems or news updates, has been used to deploy backdoors and establish persistent access to compromised systems.
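One way defenders might triage newly registered domains like those described above is a simple keyword screen. The sketch below is illustrative only: the term lists, the `min_hits` threshold, and the function names are assumptions, and plain substring matching will produce both false positives and misses.

```python
# Hypothetical keyword lists; a real deployment would derive these
# from current threat intelligence rather than hard-coding them.
CONFLICT_TERMS = ("missile", "airstrike", "alert", "ceasefire")
LURE_TERMS = ("donate", "relief", "crypto")


def matched_terms(domain):
    """Return the conflict and lure terms that appear in the domain name."""
    name = domain.lower()
    return [t for t in CONFLICT_TERMS + LURE_TERMS if t in name]


def flag_domains(domains, min_hits=2):
    """Return the domains containing at least `min_hits` watched terms."""
    return [d for d in domains if len(matched_terms(d)) >= min_hits]
```

Requiring two or more hits is a deliberate trade-off in this sketch: a single term such as "alert" appears in many legitimate names, while a combination such as "missile", "alert", and "donate" is a stronger signal worth manual review.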

This convergence of cybercrime and state-aligned activity reflects a broader trend: the industrialization of cyber threats. Ransomware-as-a-service platforms now provide end-to-end attack capabilities, lowering the barrier to entry for less experienced actors. With subscription costs as low as $500 per month, cyberattacks are becoming accessible to a much wider range of actors.

India’s Evolving Role in the Hybrid Warfare Landscape

India’s cybersecurity environment in 2026 reflects many of the same dynamics observed in the Middle East. State-sponsored actors are focusing on long-term access and intelligence gathering, targeting government networks, defense systems, and critical industries. These operations often remain undetected for extended periods, leveraging advanced persistent techniques to maintain access.

At the same time, hacktivist groups in India are becoming more organized and technically capable. Their activities now include coordinated data leaks, disruption campaigns, and the use of advanced tools traditionally associated with nation-state actors.

Supply chain attacks are a growing concern, particularly in sectors undergoing rapid digital transformation. Healthcare, manufacturing, and financial services are vulnerable due to their reliance on interconnected systems. These vulnerabilities highlight the importance of continuous monitoring, vendor risk management, and layered security architectures.

Intelligence-Driven Defense in the Age of Hybrid War Strategy

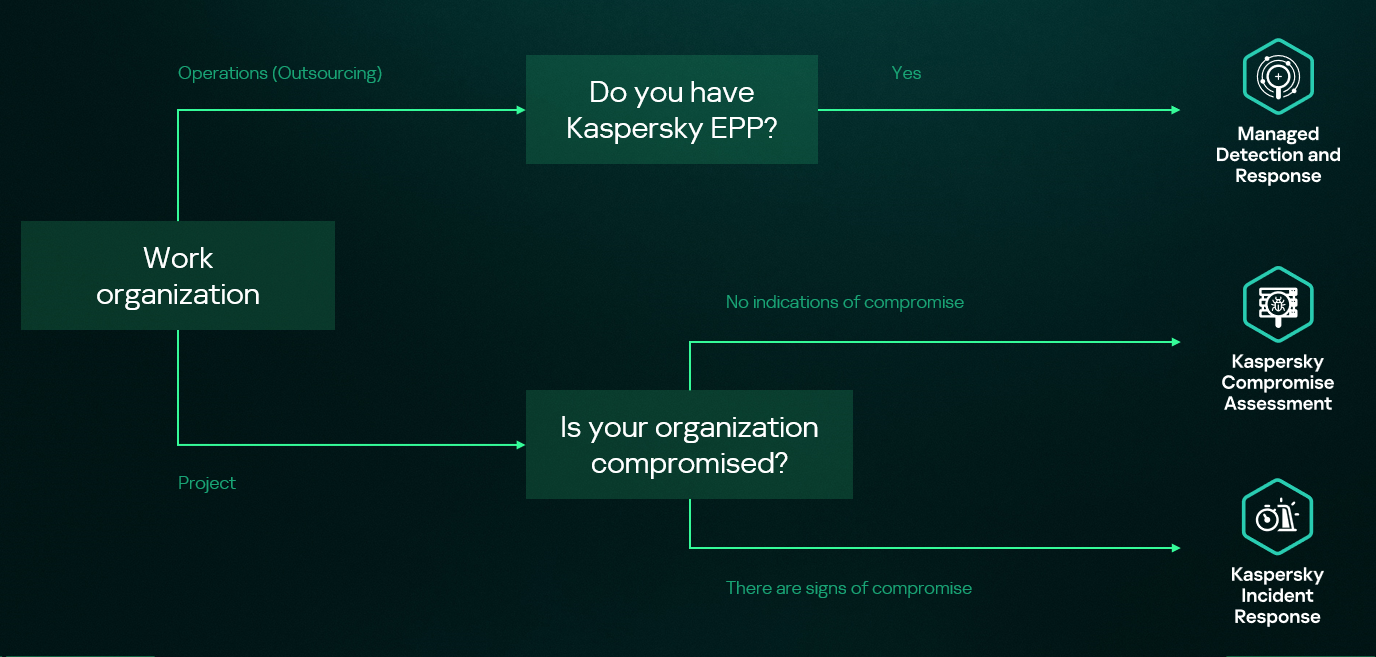

As hybrid warfare evolves, traditional reactive security models are proving insufficient. Organizations are shifting toward intelligence-driven approaches that integrate tactical, operational, strategic, and technical insights.

This shift is critical in a landscape where attackers exploit legitimate platforms, use “living off the land” techniques, and maintain persistence for extended periods. Behavioral analytics, anomaly detection, and contextual authentication are becoming essential tools for identifying threats that bypass conventional defenses.
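As a rough illustration of the anomaly detection mentioned above, the sketch below flags an observation that deviates sharply from an account's own history. It is a minimal z-score check under stated assumptions: the function name, the threshold of 3.0, and the idea of using hourly login counts as the input are all hypothetical, and production behavioral analytics would model far more context.

```python
from statistics import mean, pstdev


def is_anomalous(history, observed, threshold=3.0):
    """Flag `observed` if it lies more than `threshold` standard
    deviations from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to establish a baseline
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold
```

For example, with a baseline of roughly 10 logins per hour, a sudden reading of 40 would be flagged while a reading of 11 would not. Because the baseline comes from the account's own behavior, this style of check can surface activity that uses legitimate tools and valid credentials.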

Equally important is the adoption of proactive measures such as multi-factor authentication, network segmentation, and robust incident response frameworks. Information sharing between organizations and governments is also emerging as a key component of resilience in the face of coordinated cyber warfare and kinetic attacks.

Conclusion

Hybrid warfare in 2026 is an operational reality. Cyber warfare and kinetic attacks now work in tandem, creating rapid, high-impact disruptions across both digital and physical systems. This is the core of modern hybrid warfare: fast, coordinated, and difficult to contain.

Defending against this requires a shift to intelligence-led security. In a landscape shaped by cyber-physical warfare, organizations need real-time visibility, faster response, and the ability to anticipate threats, not just react to them. Cyble enables this shift with its AI-native platform, Cyble Blaze AI, designed to predict and stop threats before they escalate.

Strengthen your hybrid war strategy: explore Cyble’s threat intelligence capabilities or schedule a demo to see proactive security in action.

The post Hybrid Warfare 2026: When Cyber Operations and Kinetic Attacks Converge appeared first on Cyble.