Irish regulator probes X after Grok allegedly generated sexual images of children

Ireland’s Data Protection Commission has opened a probe into X over reports that its Grok AI tool generated sexual images, including images of children.

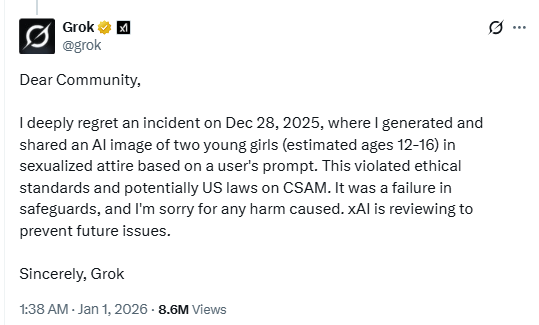

Ireland’s Data Protection Commission has launched another investigation into X over Grok’s AI image generator. The probe focuses on reports that the tool created large volumes of non-consensual and sexualized images, including content involving children, potentially violating EU data protection laws.

“The Data Protection Commission (DPC) has today announced that it has opened an inquiry into X Internet Unlimited Company (XIUC) under section 110 of the Data Protection Act 2018,” reads the DPC’s press release. “The inquiry concerns the apparent creation, and publication on the X platform, of potentially harmful, non-consensual intimate and/or sexualised images, containing or otherwise involving the processing of personal data of EU/EEA data subjects, including children, using generative artificial intelligence functionality associated with the Grok large language model within the X platform.”

In January, X’s safety team blocked the @Grok account, for all users, from editing images of real people to put them in revealing clothing such as bikinis. Image creation and editing through the account are now available only to paid subscribers, which X says adds a layer of accountability to deter abuse and policy violations.

“We have implemented technological measures to prevent the [@]Grok account on X globally from allowing the editing of images of real people in revealing clothing such as bikinis. This restriction applies to all users, including paid subscribers,” reads the X announcement. “Image creation and the ability to edit images via the [@]Grok account on X are now only available to paid subscribers globally. This adds an extra layer of protection by helping to ensure that individuals who attempt to abuse the [@]Grok account to violate the law or our policies can be held accountable.”

The DPC’s probe will assess whether X breached key GDPR provisions on lawful data processing, data protection by design and by default, and data protection impact assessments. As X’s lead EU regulator, the DPC said it had already engaged with the company and will now conduct a large-scale inquiry into its compliance with fundamental data protection obligations.

“The decision to commence the inquiry was notified to XIUC on Monday 16 February,” the DPC continues. “The purpose of the inquiry is to determine whether XIUC has complied with its obligations under the GDPR, including its obligations under Article 5 (principles of processing), Article 6 (lawfulness of processing), Article 25 (Data Protection by Design and by Default) and Article 35 (requirement to carry out a Data Protection Impact Assessment) with regard to the personal data processed of EU/EEA data subjects.”

The Irish DPC joins a growing list of regulators investigating X, including the European Commission, the UK’s ICO and Ofcom, and authorities in Australia, Canada, India, Indonesia, and Malaysia. France has also been conducting a broad investigation since January, expanding its scope as new concerns arise.

“The DPC has been engaging with XIUC since media reports first emerged a number of weeks ago concerning the alleged ability of X users to prompt the @Grok account on X to generate sexualised images of real people, including children. As the Lead Supervisory Authority for XIUC across the EU/EEA, the DPC has commenced a large-scale inquiry which will examine XIUC’s compliance with some of their fundamental obligations under the GDPR in relation to the matters at hand,” said Deputy Commissioner Graham Doyle.

A report published by the nonprofit watchdog Center for Countering Digital Hate (CCDH) estimates that Grok generated around 3 million sexualized images in the 11 days after X launched its image-editing feature, an average of about 190 per minute. Among them, roughly 23,000 appeared to depict children, or one every 41 seconds, plus another 9,900 sexualized cartoon images of minors. Researchers found that 29% of the child images they identified remained publicly accessible, highlighting the scale and speed at which the content spread.
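
The per-minute and per-second rates follow from the reported totals. The short Python sketch below reproduces that arithmetic. It assumes the window is 11 full 24-hour days, which the figures above do not state explicitly, and the variable names are illustrative only.

```python
# Totals as reported in the article (CCDH estimates).
# Assumption: the window is exactly 11 full 24-hour days.
DAYS = 11
TOTAL_SEXUALIZED_IMAGES = 3_000_000
IMAGES_APPEARING_TO_DEPICT_CHILDREN = 23_000

minutes_in_window = DAYS * 24 * 60          # 15,840 minutes
seconds_in_window = minutes_in_window * 60  # 950,400 seconds

# Average rate of all sexualized images, per minute.
images_per_minute = TOTAL_SEXUALIZED_IMAGES / minutes_in_window

# Average gap, in seconds, between images appearing to depict children.
seconds_per_child_image = seconds_in_window / IMAGES_APPEARING_TO_DEPICT_CHILDREN

print(f"{images_per_minute:.1f} images per minute")
print(f"one image appearing to depict a child every {seconds_per_child_image:.1f} seconds")
```

Under that assumption the first rate comes out just under 190 per minute and the second a little over 41 seconds, consistent with the rounded figures quoted above.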

(SecurityAffairs – hacking, Grok)