Announcing Cloudflare Account Abuse Protection: prevent fraudulent attacks from bots and humans

Today, Cloudflare is introducing a new suite of fraud prevention capabilities designed to stop account abuse before it starts. We've spent years empowering Cloudflare customers to protect their applications from automated attacks, but the threat landscape has evolved. The industrialization of hybrid automated-and-human abuse presents a complex security challenge to website owners. Consider, for instance, a single account that’s accessed from New York, London, and San Francisco in the same five minutes. The core question in this case is not “Is this automated?” but rather “Is this authentic?”

Website owners need the tools to stop abuse on their website, no matter who it’s coming from.

During our Birthday Week in 2024, we gifted leaked credentials detection to all customers, including everyone on a Free plan. Since then, we've added account takeover detection IDs as part of our bot management solution to help identify bots attacking your login pages.

Now, we’re combining these powerful tools with new ones. Disposable email check and email risk help you enforce security preferences for users who sign up with throwaway email addresses, a common tactic for fake account creation and promotion abuse, or whose emails are deemed risky based on email patterns and infrastructure. We’re also thrilled to introduce Hashed User IDs — per-domain identifiers generated by cryptographically hashing usernames — that give customers better insight into suspicious account activity and greater ability to mitigate potentially fraudulent traffic, without compromising end user privacy.

The new capabilities we’re announcing today go beyond automation, identifying abusive behavior and risky identities among human users and bots. Account Abuse Protection is available in Early Access, and any Bot Management Enterprise customer can use these features at no additional cost for a limited period, until the general availability of Cloudflare Fraud Prevention later this year. If you want to learn more about this Early Access capability, sign up here.

The barrier to entry for fraudulent behavior is dangerously low, especially with the availability of massive datasets and access to automated tools that commit account fraud at scale. Website owners aren’t just dealing with individual hackers, but industrialized fraud. Last year, we highlighted how 41% of logins across our network use leaked credentials. This number has only grown following the exposure of a database holding 16 billion records, and multiple high-profile breaches have since come to light.

What’s more, users reuse passwords across multiple platforms, meaning a single leak from years ago can still unlock a high-value retail account or even a bank account today. Our leaked credential check is a free feature that checks whether a password has been leaked in a known data breach of another service or application on the Internet. This is a privacy-preserving credential checking service that helps protect our users from compromised credentials, meaning Cloudflare performs these checks without accessing or storing plaintext end user passwords. Passwords are hashed (that is, converted into a fixed-length, random-looking string of characters using a cryptographic algorithm) for the purpose of comparing them against a database of leaked credentials. If you haven’t already turned on our leaked credential check, enable it now to keep your accounts safe from easy hacks!
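To make the hash-and-compare idea concrete, here is a minimal Python sketch. It is an illustration only, not Cloudflare's actual protocol: the choice of SHA-256, the `LEAKED_HASHES` set, and the function names are all assumptions made for this example, and a production service would likely layer further privacy protections on top.

```python
import hashlib


def hash_password(password: str) -> str:
    """Return the SHA-256 hex digest of a password (algorithm chosen for illustration)."""
    return hashlib.sha256(password.encode("utf-8")).hexdigest()


# Hypothetical breach corpus. It is built from plaintext here only to keep the
# example self-contained; a real corpus would hold digests, never passwords.
LEAKED_HASHES = {hash_password(p) for p in ("123456", "password", "qwerty")}


def is_leaked(password: str) -> bool:
    """Compare the digest of the submitted password against the leaked digests."""
    return hash_password(password) in LEAKED_HASHES
```

The lookup only ever compares digests, so the check itself never needs to keep the plaintext password around after hashing.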

Access to a large database of leaked credentials is only useful if an attacker can cycle through them quickly across many sites to identify which accounts are still vulnerable due to password reuse. In our Black Friday analysis in 2024, we observed that more than 60% of traffic to login pages across our network was automated. That’s a lot of bots trying to break in.

To help customers protect their login endpoints from constant bombardment, we added account takeover (ATO)-specific detections to highlight suspicious traffic patterns. This is part of our recent focus on per-customer detections, in which we provide behavioral anomaly detection unique to each bot management customer. Today, bot management customers can see and mitigate attempted ATO attacks in their login requests directly on the Security analytics dashboard.

In the card on the left within the Security analytics dashboard, you can view and address attempted account takeover attacks.

In the last week, our ATO detections combined caught an average of 6.9 billion suspicious login attempts daily, across our network. These ATO detections, along with the many other detection mechanisms in our bot management solution, create a layered defense against ATO and other malicious automated attacks.

To discern automation, or to discern intent and identity? That is the question. Our answer: yes and yes, as both are critical layers of a robust security posture. Attackers now operate at a scale previously reserved for enterprise services: they leverage massive credential leaks, use human-powered fraud farms to spoof devices and locations, and create synthetic identities to maintain thousands — even millions — of fake accounts for promotion and platform abuse. A human being with automated tools could be draining accounts, abusing promotions, committing payment fraud, or all of the above.

Beyond that, automation is accessible like never before, particularly as users become better acquainted with using AI agents and even long-standing, “traditional” browsers move toward having agentic capabilities by default. Whether it’s a lone actor using an AI agent or a coordinated fraud campaign, the threat isn’t as simple as a single script — it can involve human intent, with automated execution.

Consider the following scenarios we’ve heard from our customers:

We have 1,000 new users this month, but more than half of them are fake identities who benefit from a free trial, then disappear.

The attacker logged in with the correct password, so how do I know that it isn’t the real user?

This entity is acting at human pace, and they are draining accounts.

These problems can't be solved by only assessing automation; they require checking for authenticity and integrity. This is the gap that our dedicated fraud prevention capabilities address.

Let’s start by assessing the earliest point of potential account abuse: account creation. Fake or bulk account creation is one of the biggest topics in conversations about website fraud, as it can open the door for attackers to access an application — or even an entire business model.

Cloudflare is giving customers the tools to assess suspicious account creation at the source in two ways:

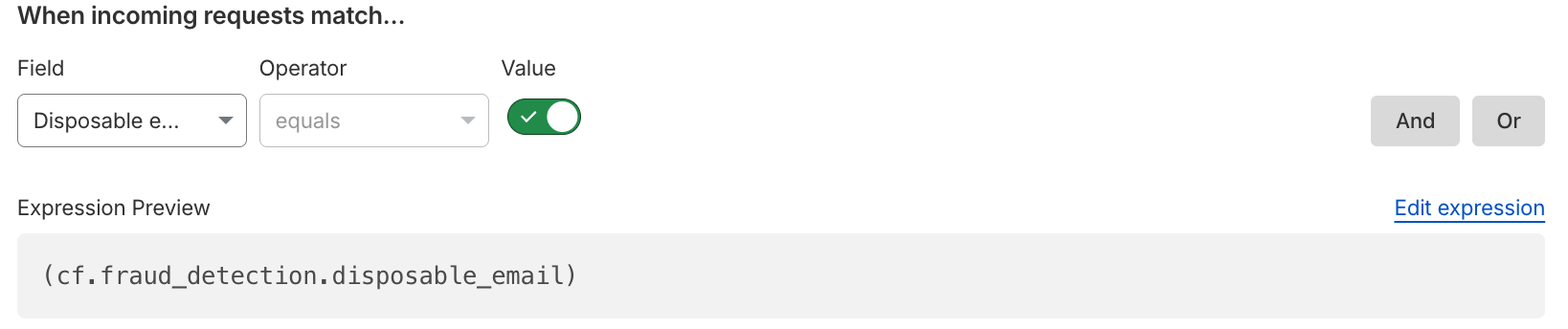

Disposable email check: Detect when users sign up with disposable, or throwaway, email addresses commonly used for promotion abuse and fake account creation. These disposable email services allow attackers to spin up thousands of "unique" accounts without maintaining real infrastructure, particularly unauthenticated disposable emails that provide instant access without account creation or free unlimited email aliases. Customers can use this binary field as they build rules to enforce security preferences, choosing to block all disposable emails outright, or perhaps issuing a challenge to anyone attempting to create an account with a disposable email.

Email risk: Cloudflare analyzes email patterns and infrastructure to provide risk tiers (low, medium, high) that customers can use in security rules. We know that not all email addresses are created equal; an address with the format firstname.lastname@knowndomain.com carries different risk characteristics than xk7q9m2p@newdomain.xyz. Email risk tiers allow customers to express their tolerance for risk and friction at the point of account creation.

Both disposable email check and email risk are now available in security analytics and security rules, equipping website owners to protect their account creation flow. These detections address a fundamental problem: by the time an account is committing abuse, it's already too late. The website owner has already paid acquisition costs, the fraudulent user has consumed promotional credits, and remediation requires manual review. Mitigating suspicious emails means adding the appropriate friction at signup — the moment it matters most.
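As a rough illustration of how the two signals could feed a signup policy, the Python sketch below maps a disposable-email flag and a risk tier to an action. The function name, the `Action` enum, and the specific policy (block disposable addresses, challenge high-risk ones) are hypothetical choices for this example; they are not Cloudflare rule syntax or field names, and each site would pick its own thresholds.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    CHALLENGE = "challenge"
    BLOCK = "block"


VALID_TIERS = {"low", "medium", "high"}


def signup_action(is_disposable: bool, email_risk: str) -> Action:
    """Pick a signup action from the two email signals (example policy only)."""
    if email_risk not in VALID_TIERS:
        raise ValueError(f"unknown risk tier: {email_risk!r}")
    if is_disposable:
        # Strictest option: refuse throwaway addresses outright.
        return Action.BLOCK
    if email_risk == "high":
        # Add friction rather than block when the address merely looks risky.
        return Action.CHALLENGE
    return Action.ALLOW
```

A site with a lower tolerance for friction might instead challenge disposable addresses and allow everything else; the point is that the decision happens at signup, before any promotional credit is spent.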

Understanding patterns of abuse requires visibility, not only into the network but also into account activity. Traditionally, security has meant looking through the lens of IPs and isolated HTTP requests to spot automated activity, but website owners aren’t just thinking in terms of network signals; they are also considering their users and known accounts. That’s why we’re expanding our mitigation toolbox to match the way applications are actually structured, focusing on user-based detection of fraudulent activity.

Attackers can effortlessly rotate IPs to hide their tracks. But forcing them to repeatedly generate new, credible accounts introduces massive friction, especially when combined with account creation protections. When we look past the network layer and map fraudulent actions to a given compromised or abusive account, we can spot targeted behavior tied to a single, persistent actor and put a stop to the abuse. In this way, we’re shifting the defense strategy to the account level, instead of playing whack-a-mole with rotating IP addresses and residential proxies. This means that our customers can mitigate abusive behavior based on the way their applications separate identity.

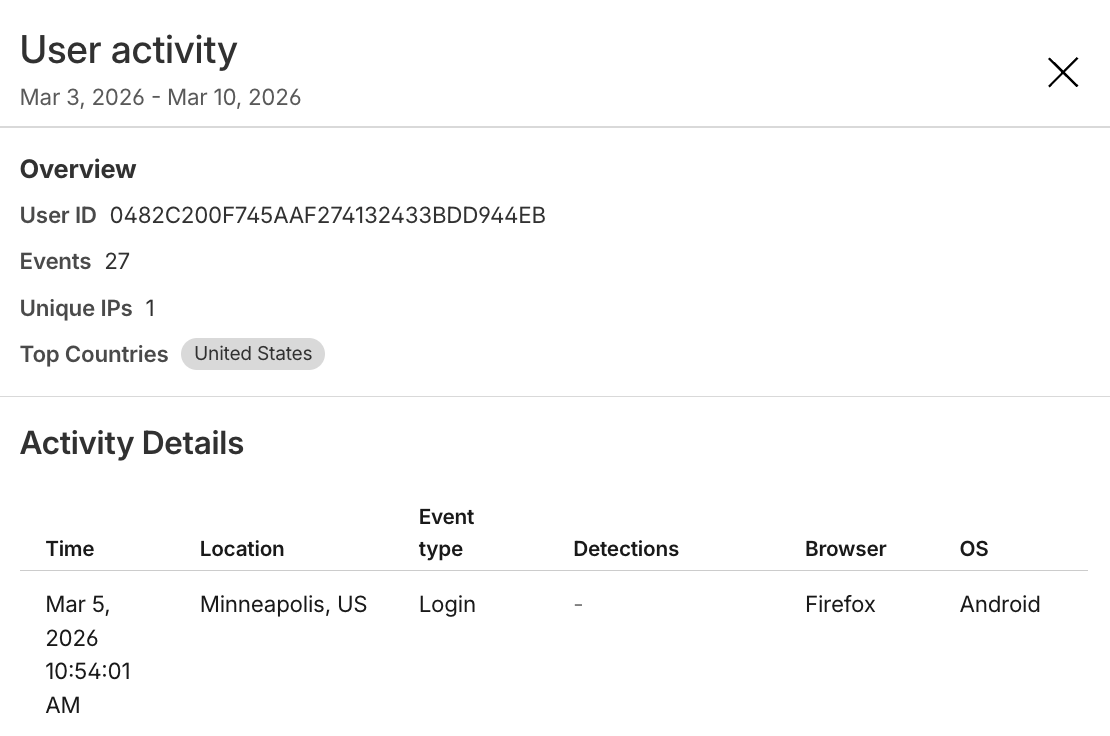

To arm website owners with this capability, Cloudflare is releasing a Hashed User ID that customers can use in Security analytics, Security rules, and Managed Transforms. Hashed User IDs are per-domain, cryptographically hashed versions of the values in the username field, and each Hashed User ID is a unique and stable identifier generated for a given username on a customer application. Importantly, the actual username is not logged or stored by Cloudflare as part of this service. As with leaked credentials check and ATO detections, which identify login traffic and then encrypt credentials for comparison, we are prioritizing end user privacy while empowering our customers to take action against fraudulent behavior.
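One common way to build a per-domain, stable, hashed identifier is a keyed hash. The Python sketch below uses HMAC-SHA256 with a per-domain secret. This is an assumption made for illustration, not a description of how Cloudflare derives Hashed User IDs; the function name, the key handling, and the username normalization are all hypothetical.

```python
import hashlib
import hmac


def hashed_user_id(username: str, domain_key: bytes) -> str:
    """Derive a stable identifier for a username, scoped to one domain's key."""
    # Normalizing case and whitespace is an assumption of this sketch.
    normalized = username.strip().lower()
    return hmac.new(domain_key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical per-domain secrets; real keys would be generated and stored securely.
shop_key = b"secret-for-shop.example"
bank_key = b"secret-for-bank.example"

same_user_a = hashed_user_id("Alice@example.com", shop_key)
same_user_b = hashed_user_id("alice@example.com ", shop_key)
other_domain = hashed_user_id("alice@example.com", bank_key)
```

In this sketch the same username always yields the same identifier within a domain, so activity can be grouped per account, while a different domain key yields an unrelated identifier, so values cannot be correlated across sites.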

With access to Hashed User IDs, website owners can:

See top users: Which accounts have the most activity?

See when a unique user logs in from a country they don’t usually log in from, or from multiple countries in one day!

Mitigate traffic based on unique user, such as blocking a user with historically suspicious activity.

Combine fields to see when accounts are being targeted with leaked credentials.

See what network patterns or signals are associated with unique users.

The expanded view of a single Hashed User ID within the Security analytics dashboard, showing the activity details of that unique user, including their login location and their browser.

This user-level visibility transforms how website owners can investigate and mitigate traffic. Instead of examining individual requests in isolation, our customers can see the full picture of how attackers are targeting and hiding among legitimate users.
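The multi-country check mentioned above can be sketched in a few lines of Python. The event shape `(hashed_user_id, timestamp, country)` and the function name are assumptions for this example, and the logic is a simple sliding window rather than anything the dashboard is known to run.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def users_in_multiple_countries(events, window=timedelta(days=1), min_countries=2):
    """Return {user_id: countries} for users seen in several countries within `window`.

    `events` is an iterable of (hashed_user_id, timestamp, country) tuples.
    """
    by_user = defaultdict(list)
    for user_id, ts, country in events:
        by_user[user_id].append((ts, country))

    flagged = {}
    for user_id, logins in by_user.items():
        logins.sort()  # chronological order
        start = 0
        for end in range(len(logins)):
            # Shrink the window until it spans at most `window` of time.
            while logins[end][0] - logins[start][0] > window:
                start += 1
            countries = {country for _, country in logins[start:end + 1]}
            if len(countries) >= min_countries:
                flagged[user_id] = countries
                break
    return flagged


events = [
    ("user-a", datetime(2025, 1, 1, 12, 0), "US"),
    ("user-a", datetime(2025, 1, 1, 12, 3), "GB"),
    ("user-b", datetime(2025, 1, 1, 9, 0), "US"),
    ("user-b", datetime(2025, 1, 10, 9, 0), "FR"),
]
flagged = users_in_multiple_countries(events)
```

Here `user-a` is flagged because two countries appear three minutes apart, while `user-b` is not, since the two logins are nine days apart and plausibly reflect ordinary travel.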

If you want to learn more about this Early Access capability, sign up here. All Bot Management Enterprise customers are eligible to add these new Account Abuse Protection features today, and we’d love to open the conversation with any and all prospective Bot Management customers.

While bot detections will continue to answer the question of automation and intent, fraud detections delve into the question of authenticity. Together, they give website owners comprehensive tools to fight against the full spectrum of account abuse. This suite is one step in our ongoing investment to protect the entire user journey — from account creation and login to secure checkouts and the integrity of every interaction.