How Cloudy translates complex security into human action

Today’s security ecosystem generates a staggering amount of complex telemetry. For instance, processing a single email requires analyzing sender reputation, authentication results, link behavior, infrastructure metadata, and countless other attributes. Simultaneously, cloud access security broker (CASB) engines continuously scan SaaS environments for signals that indicate misconfigurations, risky access, and exposed data.

But while detections have become more sophisticated, explanations have not always kept pace.

Security and IT teams are often aware when something is flagged, but they do not always know, at a glance, why. End users are asked to make real-time decisions about emails that may impact the entire organization, yet they are rarely given clear, contextual guidance in the moment that matters.

Cloudy changes that.

Cloudy is our LLM-powered explanation layer, built directly into Cloudflare One. It translates complex machine learning outputs into precise, human-readable guidance for security teams and end users alike. Instead of exposing raw technical signals, Cloudy surfaces the reasoning behind a detection in a way that drives informed action.

For Cloudflare Email Security, this means helping users understand why a message was flagged before they escalate it to the security operations center, or SOC. For Cloudflare CASB, it means helping administrators quickly understand the risk and remediation path for SaaS findings without having to manually assess low-level signals.

This post outlines how we are extending Cloudy across Phishnet and API CASB to improve decision making, reduce unnecessary noise, and turn complex security signals into clear, actionable insight.

When an email is analyzed by Cloudflare Email Security, it is not evaluated by a single signal or model. Instead, a wide range of machine learning models analyze different parts of the message, from sender reputation and message structure to content, links, and behavioral patterns. This model set continues to grow as our machine learning team regularly trains and deploys new detections to keep pace with evolving threats.

Based on this analysis, messages are labeled with outcomes such as Malicious, Suspicious, Spam, Bulk, or Spoof. While these detections have been effective, we consistently heard feedback from customers that it was not always clear why a message was flagged. The decision was correct, they told us — but the reasoning behind it was often opaque to both end users and security teams.

To address this, we introduced the first version of Cloudy: LLM-powered summaries for detections. These summaries translate what our machine learning models are seeing into human-readable explanations. Initially, these summaries were available in the Cloudflare dashboard to help SOC teams during investigations. Over the past few months, customer feedback has confirmed that these explanations significantly improve understanding of our detections.

As we continued speaking with customers, another challenge surfaced. Our Phishnet tool allows users to submit messages to the SOC when they believe an email may be suspicious. While this empowers employees to participate in security, many SOC teams told us their queues were being flooded with submissions that turned out to be clean messages.

The result was unnecessary backlog and slower response times for emails that actually required investigation.

At the same time, customers told us that traditional security awareness training was not always enough. Users still struggled to evaluate emails in the moment, when it mattered most. They wanted more contextual guidance directly within the workflow where decisions are made.

This upgrade is designed to address both of these problems. By bringing clearer explanations and contextual education directly into Phishnet, we aim to help users make better decisions while reducing noise for SOC teams, without sacrificing security.

As organizations and attack techniques have evolved, so has the role of the end user. Modern email threats increasingly rely on social engineering, subtle impersonation, and psychological pressure, all of which place users directly in the decision path.

In response, users are being asked to act as an additional layer of defense. However, traditional security awareness tools often fall short. Training is typically delivered through periodic sessions or simulated phishing campaigns, disconnected from real messages and real decisions. When users encounter an unfamiliar email, they are left without enough context to confidently assess risk.

This gap commonly leads to one of two outcomes. Some users submit nearly every questionable message to the SOC, creating excessive noise and slowing down investigations. Others interact with messages they should not, simply because nothing in the moment signals clear risk.

By embedding Cloudy directly into Phishnet, we close this gap.

Users receive immediate, contextual explanations that help them understand what Cloudflare is seeing and why a message may be risky. This enables users to make informed decisions at the point of interaction, reduces unnecessary escalations to the SOC, and allows security teams to focus on the messages that truly require attention.

Over time, this approach shifts users from being a source of noise to becoming an effective part of the detection and response workflow. The result: stronger email security, without adding friction or burden to security teams.

In the next month, we will upgrade our Phishnet reporting button to bring Cloudy summaries to end users.

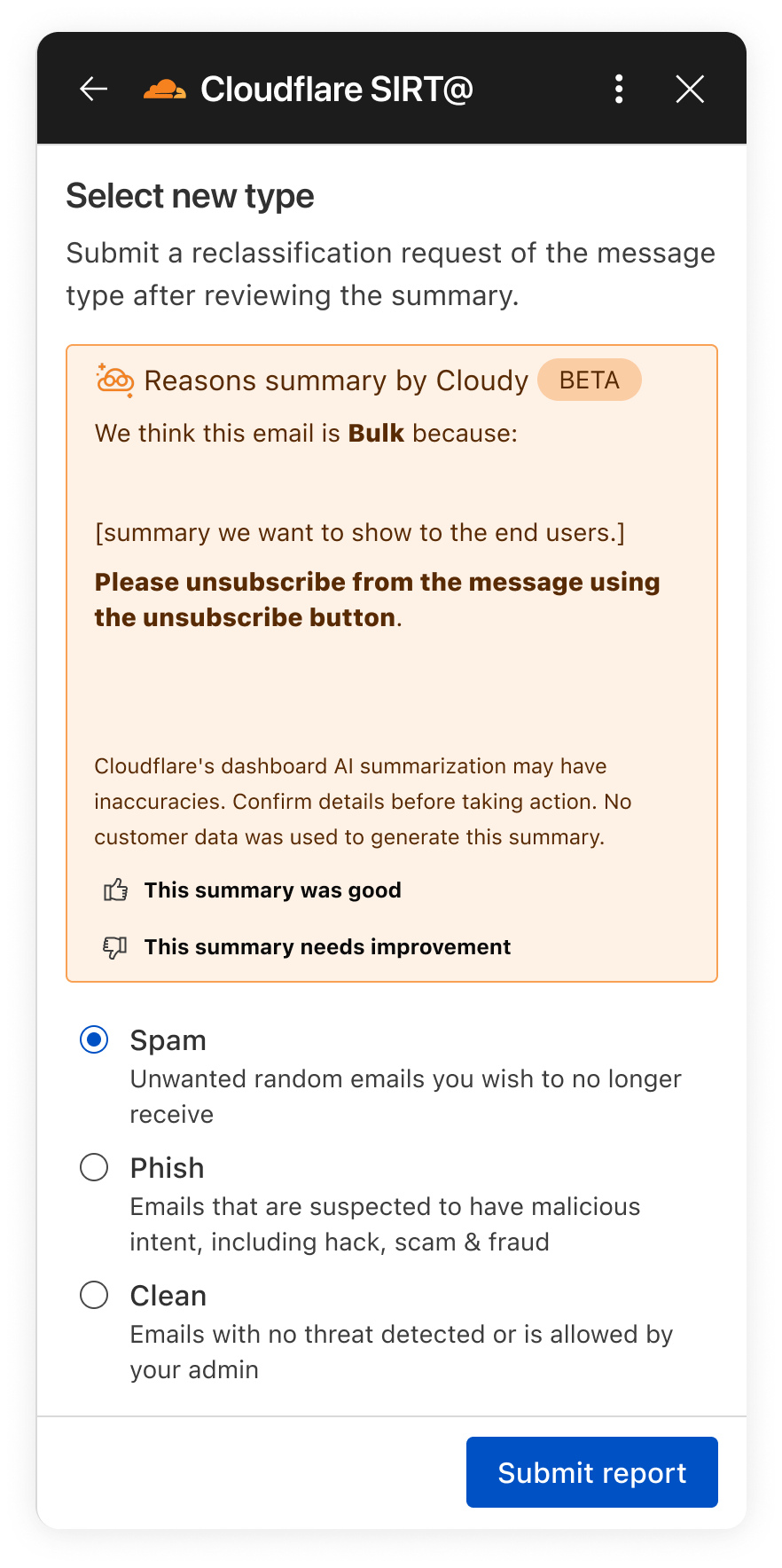

The new Phishnet screens will show Cloudy summaries.

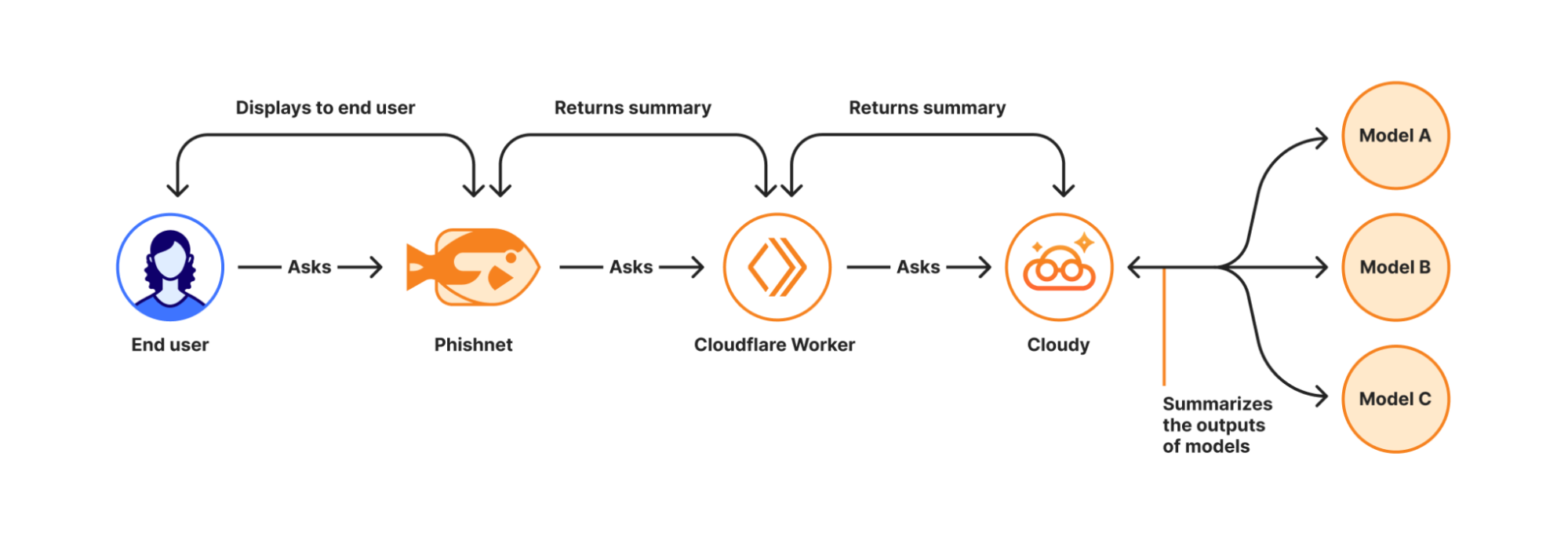

With this upgrade, end users receive a simplified, user-friendly version of Cloudy summaries at the moment they report a message. These summaries are generated in real time using Cloudflare Workers AI and run directly on Cloudflare’s global Workers platform when a user interacts with a message in Phishnet.

When a user clicks the Phishnet reporting button, the request triggers a Workers-based workflow that aggregates structured outputs from multiple detection models associated with that message. These model outputs include signals such as sender reputation, domain and infrastructure characteristics, authentication results, link and content analysis, and behavioral indicators collected during message processing.

The aggregated signals are then passed to Workers AI, where a series of purpose-built prompts generate a natural language explanation. Each prompt is designed to transform low-level detection outputs into a concise and human-readable summary. This process focuses on explanation rather than classification and does not alter the original disposition of the message.
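The flow described above can be sketched roughly as follows. This is a minimal illustration in Python, not the production Workers code: the `Signal` and `Detection` types, their field names, and the prompt wording are all assumptions made for the example, and the actual call to Workers AI is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """One structured output from a detection model (hypothetical shape)."""
    source: str  # e.g. "authentication" or "sender"
    name: str    # e.g. "SPF Fail"
    detail: str  # short machine-oriented description


@dataclass(frozen=True)
class Detection:
    """A message's disposition plus the signals behind it (hypothetical shape)."""
    disposition: str  # e.g. "Suspicious"; read but never modified below
    signals: tuple[Signal, ...]


def build_explanation_prompt(detection: Detection) -> str:
    """Aggregate signals into one prompt that asks only for an explanation."""
    signal_lines = [f"- [{s.source}] {s.name}: {s.detail}" for s in detection.signals]
    return "\n".join([
        "Explain in plain language why this email received its disposition.",
        "Do not change or second-guess the disposition.",
        f"Disposition: {detection.disposition}",
        "Signals:",
        *signal_lines,
    ])


detection = Detection(
    disposition="Suspicious",
    signals=(
        Signal("authentication", "SPF Fail", "Sender explicitly not authorized by SPF"),
        Signal("sender", "Domain Age", "Domain recently registered"),
    ),
)
prompt = build_explanation_prompt(detection)
print(prompt)
```

Keeping the types frozen mirrors the point made above: the explanation step reads the detection outputs but does not alter the original disposition.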

How Cloudy transforms detections into clear explanations.

For this experience, we intentionally redesigned the summaries compared to those shown to administrators in the Cloudflare dashboard. During testing, we found that admin-focused summaries often relied on technical concepts that were difficult for non-technical users to interpret. Terms such as ASNs, IP reputation, or authentication failures required translation.

To ensure end users can understand the summaries, Phishnet emphasizes plain-language explanations while preserving the meaning of the underlying detections.

| Signal | What it means | Cloudy translation for end users |
| --- | --- | --- |
| SPF Fail | Sender explicitly not authorized by SPF | This email failed a sender verification check. |
| DKIM Fail | Message signature does not validate | The message integrity check failed, which can be a sign of tampering. |
| DMARC Fail | DMARC policy check failed | The sender’s domain could not confirm this email is legitimate. |
| Reply to Mismatch | Reply To differs from From | Replies may go to a different address than the sender shown. |
| Domain Age | Domain recently registered | The sender domain is newly created, which is common in phishing. |
| URL Low Reputation | Destination URL has poor reputation | The link destination has signals associated with risk. |
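For illustration, the mapping in the table above can be written down as a simple lookup. Cloudy generates its explanations with an LLM rather than a fixed dictionary, so this is only a sketch of the input and output relationship. The `END_USER_TRANSLATIONS` name, the `translate` helper, and the fallback sentence are assumptions for the example.

```python
# Signal name -> plain-language wording, copied from the table above.
END_USER_TRANSLATIONS = {
    "SPF Fail": "This email failed a sender verification check.",
    "DKIM Fail": "The message integrity check failed, which can be a sign of tampering.",
    "DMARC Fail": "The sender’s domain could not confirm this email is legitimate.",
    "Reply to Mismatch": "Replies may go to a different address than the sender shown.",
    "Domain Age": "The sender domain is newly created, which is common in phishing.",
    "URL Low Reputation": "The link destination has signals associated with risk.",
}

# Hypothetical fallback for signals that are not in the table.
FALLBACK = "This email has characteristics that may indicate risk."


def translate(signal_names):
    """Return one end-user sentence per signal, in the order given."""
    return [END_USER_TRANSLATIONS.get(name, FALLBACK) for name in signal_names]


explanations = translate(["SPF Fail", "Reply to Mismatch", "Unknown Signal"])
for sentence in explanations:
    print(sentence)
```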

Because this workflow runs on the Cloudflare Workers platform, summaries are generated with low latency and at global scale — so users receive immediate feedback at the moment of interaction. This real-time context allows users to better understand why an email may be risky or why it appears safe before deciding whether to escalate it to the SOC.

We are currently beta testing this experience with Microsoft customers to ensure the summaries are accurate and reliable. Cloudy summaries are not trained on customer data. We are also applying additional validation to guard against hallucinated content in the generated explanations. Accuracy is critical at this stage, as incorrect guidance could introduce real security risk.
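The post does not describe how this validation works. As one simple kind of check that such validation could include, the sketch below flags a summary that mentions a topic with no supporting signal. The `TOPIC_EVIDENCE` mapping and the `unsupported_topics` helper are hypothetical and are not Cloudflare's method.

```python
# Hypothetical mapping: a topic phrase in the summary -> signals that would justify it.
TOPIC_EVIDENCE = {
    "link": {"URL Low Reputation"},
    "newly created": {"Domain Age"},
    "verification": {"SPF Fail", "DMARC Fail"},
    "tampering": {"DKIM Fail"},
}


def unsupported_topics(summary: str, signal_names: set[str]) -> list[str]:
    """Return topics the summary mentions without any supporting signal."""
    text = summary.lower()
    return [
        topic
        for topic, evidence in TOPIC_EVIDENCE.items()
        if topic in text and not (evidence & signal_names)
    ]


signals = {"SPF Fail"}
grounded = "This email failed a sender verification check."
ungrounded = "This email failed a sender verification check and contains a risky link."

print(unsupported_topics(grounded, signals))    # nothing to flag
print(unsupported_topics(ungrounded, signals))  # flags the unsupported "link" claim
```

A keyword check like this is deliberately crude. It only shows the general idea of comparing generated text against the signals that were actually present.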

Following the beta period, we plan to expand access to all Microsoft users. We will also bring similar upgrades to the Phishnet sidebar for Google Workspace users later in 2026.

But helping end users better understand what makes an email risky is only part of the story. We are also applying Cloudy to the administrative side of security operations, where clarity and speed matter just as much. Beyond Phishnet, Cloudy now translates complex CASB findings into structured explanations that help security and IT teams quickly understand risk, prioritize remediation, and take confident action across their SaaS environments.

Inside Cloudflare One, our SASE platform, CASB connects to the SaaS and cloud tools your teams already use. By talking to providers over API, CASB gives security and IT teams:

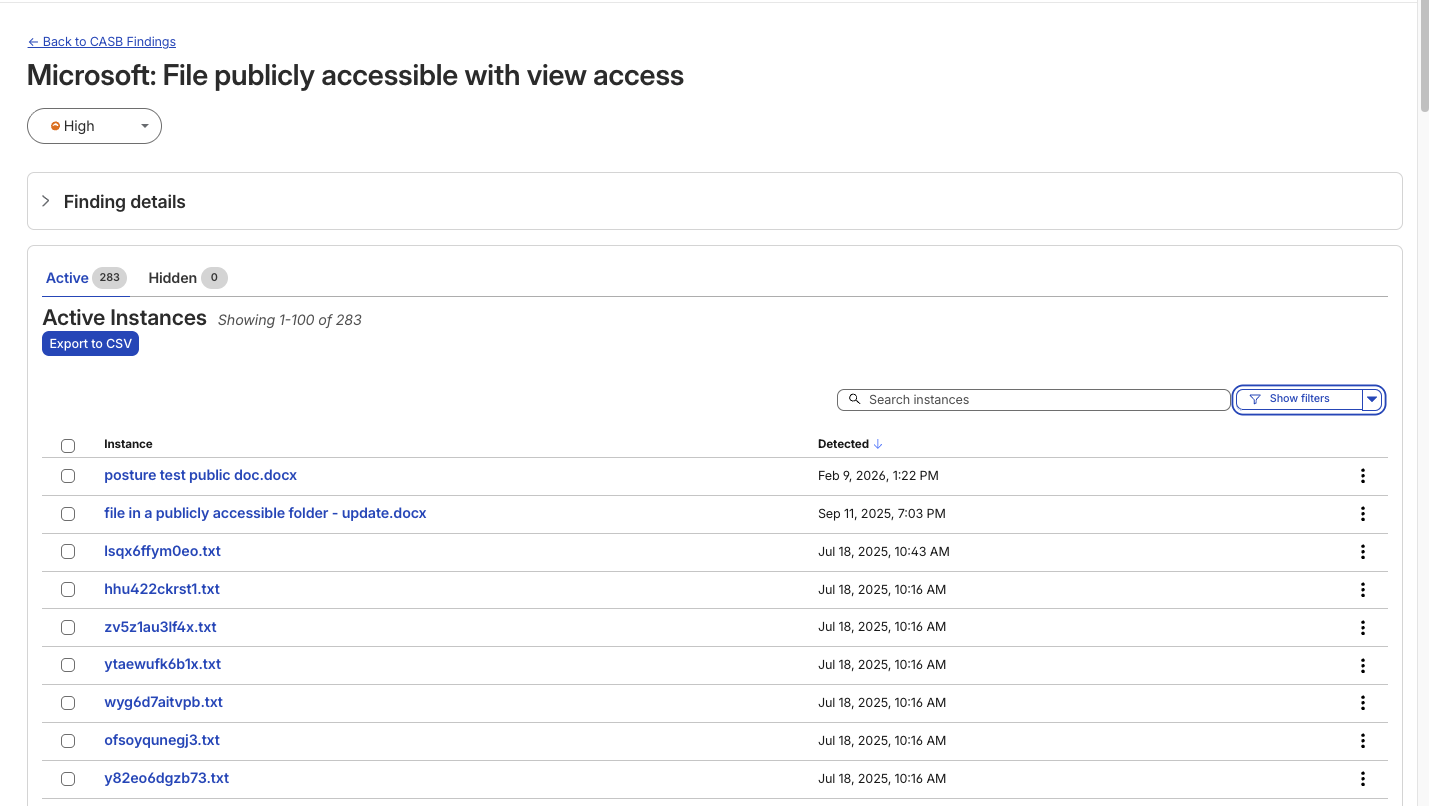

A consolidated view of misconfigurations, overshared files, and risky access patterns across apps like Microsoft 365, Google Workspace, Slack, Salesforce, Box, GitHub, Jira, and Confluence (CASB Integrations).

Continuous scanning for new issues as users collaborate, share, and adopt new tools.

Findings that are organized, searchable, and exportable for triage and reporting.

A typical CASB Findings page showing detections for a Microsoft 365 finding.

Until now, understanding exactly what triggered a CASB Finding (a detection that CASB makes across connected SaaS integrations) has been something of a black box. The information needed to explain why a given file, user, or configuration matched a CASB Finding Type was available, but the reason our system ultimately flagged it was not obvious.

With the introduction of Cloudy summaries in CASB, users receive a short description of the detection rationale with the specific details of the match listed out for easy comprehension.

Unlike a simple text summary, Cloudy for CASB provides a structured breakdown designed for immediate remediation. As seen in our beta testing across different providers, from Microsoft 365 to Dropbox, the model consistently parses findings into two distinct sections:

- Risk: It identifies exactly why the finding matters. For instance, rather than just noting a 'Suspended User,' Cloudy clarifies that this 'may indicate a compromised account or a user who should no longer have access to company data'.
- Guidance: It offers immediate next steps. Instead of generic advice, it suggests specific actions, such as verifying if a suspension was intentional or reviewing an application's legitimacy before revoking access.

This structure ensures that analysts can understand the gravity of a finding without needing deep expertise in the specific SaaS application involved.
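As a rough sketch of how a consumer of these summaries might split them into the two sections, the snippet below parses a `Risk: ... Guidance: ...` string. The plain-text layout and the `parse_summary` helper are assumptions for illustration; the post does not specify how Cloudy represents the sections internally.

```python
import re
from typing import NamedTuple


class CasbSummary(NamedTuple):
    risk: str
    guidance: str


# Assumes the summary is plain text with "Risk:" followed later by "Guidance:".
_SUMMARY_PATTERN = re.compile(
    r"Risk:\s*(?P<risk>.+?)\s*Guidance:\s*(?P<guidance>.+)",
    re.DOTALL,
)


def parse_summary(text: str) -> CasbSummary:
    """Split a summary into its Risk and Guidance sections."""
    match = _SUMMARY_PATTERN.search(text)
    if match is None:
        raise ValueError("summary is missing a Risk or Guidance section")
    return CasbSummary(match["risk"].strip(), match["guidance"].strip())


summary = parse_summary(
    "Risk: A suspended user account may indicate a compromised account or a "
    "user who should no longer have access to company data. "
    "Guidance: Verify that the suspension is intentional and that the user's "
    "access has been properly revoked."
)
print(summary.risk)
print(summary.guidance)
```

Raising an error on a missing section, rather than returning an empty string, keeps a malformed summary from being shown as if it were complete.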

An example Cloudy Summary in a CASB Posture Finding.

| Finding Type | Technical Signal | Cloudy Translation (Risk & Guidance) |
| --- | --- | --- |
| Identity & Access | Dropbox: Suspended User | Risk: A suspended user account may indicate a compromised account or a user who should no longer have access to company data. Guidance: Verify that the suspension is intentional and that the user's access has been properly revoked. |
| Shadow IT | Google Workspace: Installed 3rd-party app | Risk: This installed application with Google Sign In access may pose a risk of unauthorized access to user data. Guidance: Review the application's legitimacy and necessity, and consider revoking access if it is no longer needed. |
| Email Security | Microsoft 365: Domain DMARC record not present | Risk: The absence of a DMARC record may leave the domain vulnerable to email spoofing and phishing attacks. Guidance: Configure a DMARC record for the domain to specify how to handle unauthenticated emails. |
| Data Loss Prevention | Microsoft 365: File publicly accessible + DLP Match | Risk: This file being shared publicly with edit access may allow unauthorized modifications... especially given the potential sensitive content indicated by the DLP Profile match. Guidance: Review the file's content... and consider restricting access if necessary. |

We know that time is of the essence when our customers are getting to the bottom of an identified security issue. Any unnecessary uncertainty about what is going wrong only adds time between an insecure state and a more secure one.

We built this feature on the same privacy-first foundations as all products at Cloudflare. Cloudy summaries in CASB are generated using Cloudflare Workers AI, ensuring that your data remains within our secure infrastructure during analysis. The models are not trained on your SaaS data, and the summaries are generated ephemerally to aid in triage. This allows your team to leverage the speed of AI without exposing sensitive internal documents or configurations to public models.

For Email Security, we will continue to expand how Cloudy supports both administrators and end users. Our focus is on delivering clearer explanations, better in-context guidance, and deeper integration into daily workflows.

For CASB, we’re excited to look for opportunities where Cloudy can make it even easier for CASB administrators to understand what’s going on across their cloud and SaaS apps. Keep an eye out as we look to expand Cloudy coverage to allow administrators to query their findings using natural language, further reducing the time it takes to identify and remediate risks.

Looking ahead, this includes richer explanations for additional detection types, tighter feedback loops between user actions and detections, and continued improvements to how users and SOC teams collaborate through Phishnet. Our goal is to make Cloudy a core part of how organizations understand, trust, and act on email security decisions.

We provide all organizations, whether or not they are Cloudflare customers, with free access to our Retro Scan tool, allowing them to use our predictive AI models to scan existing inbox messages in Microsoft 365.

Retro Scan will detect and highlight any threats found, enabling organizations to remediate them directly in their email accounts. With these insights, organizations can implement further controls, either using Cloudflare Email Security or their preferred solution, to prevent similar threats from reaching their inboxes in the future.

If you are interested in how Cloudflare can help secure your inboxes, sign up for a phishing risk assessment here.